Quandela researchers demonstrate how photonic quantum generative models can be trained efficiently on classical hardware and then deployed on quantum photonic devices, providing a practical path toward scalable quantum machine learning.

Efficient Training: a Key Challenge in Quantum Machine Learning

Efficient training has long been one of the main challenges in quantum machine learning. While quantum circuits can be highly expressive in theory, optimizing them in practice often becomes computationally expensive as the number of parameters grows, due to the cost of evaluating the gradient of the loss function.

In a recent paper [1], Quandela researchers present a method for efficient training of photonic quantum generative models. Their approach allows photon-native generative models to be trained on classical hardware using modern machine learning tools, while preserving a genuinely quantum deployment phase through boson sampling. This combination offers a promising route toward practical generative photonic quantum machine learning.

How the Method Works

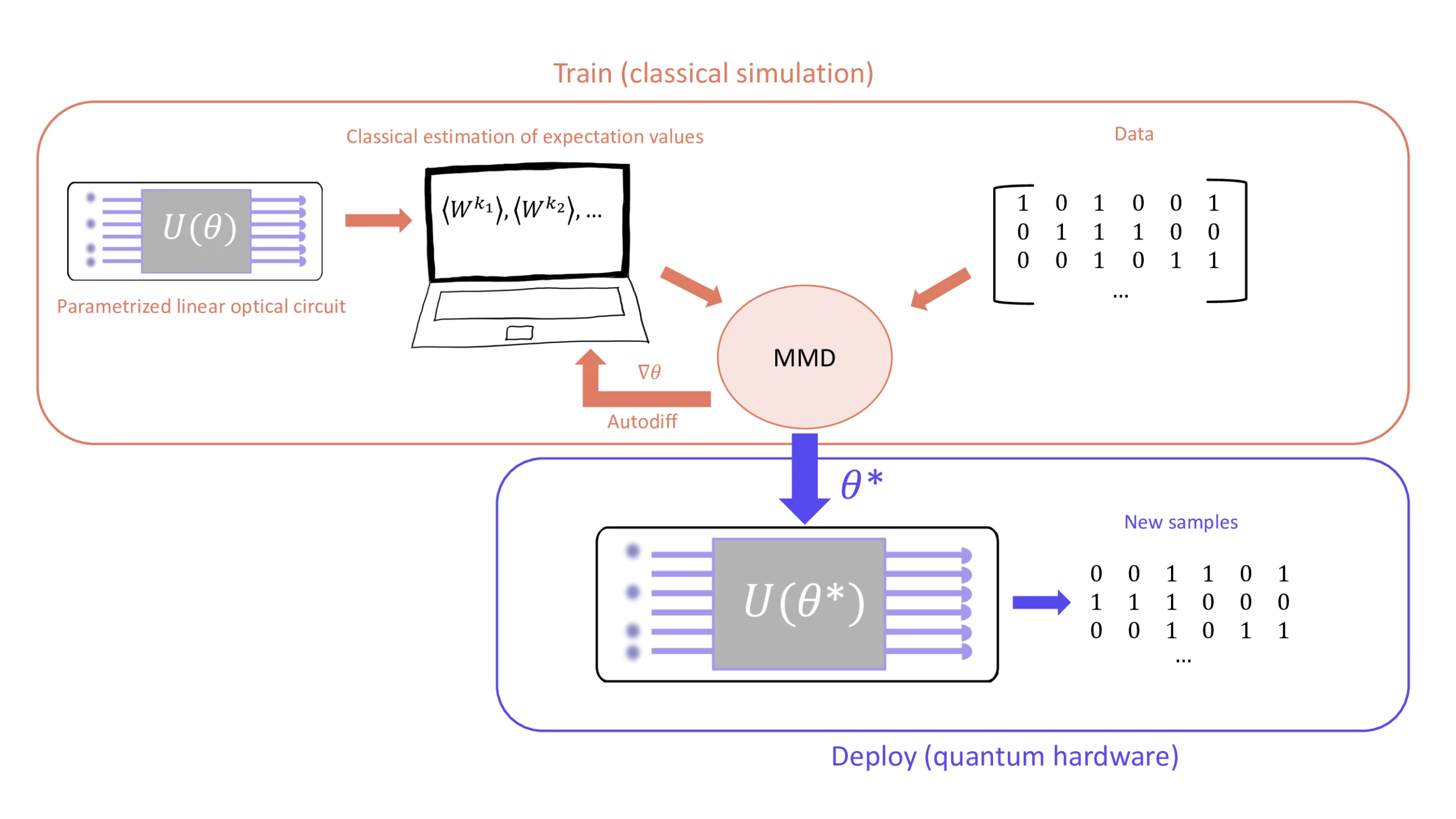

The paper introduces a training strategy based on the maximum mean discrepancy (MMD), a standard metric in machine learning for comparing probability distributions. Here, the MMD quantifies how closely the samples produced by the model match those from a target dataset.
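To make the role of the MMD concrete, here is a minimal NumPy sketch of a sample-based estimate of the squared MMD between two sets of bitstrings. The Gaussian kernel, the bandwidth `sigma`, and the toy data are assumptions chosen for illustration. They are not taken from the paper, whose loss is expressed in a form specific to linear optics.

```python
import numpy as np

def gaussian_kernel(x, y, sigma=1.0):
    """Gaussian kernel matrix between the rows of x and the rows of y."""
    # Squared Euclidean distance between every pair of rows.
    sq_dists = np.sum((x[:, None, :] - y[None, :, :]) ** 2, axis=-1)
    return np.exp(-sq_dists / (2.0 * sigma ** 2))

def mmd_squared(model_samples, target_samples, sigma=1.0):
    """Biased (V-statistic) estimate of the squared MMD between two sample sets."""
    k_xx = gaussian_kernel(model_samples, model_samples, sigma)
    k_yy = gaussian_kernel(target_samples, target_samples, sigma)
    k_xy = gaussian_kernel(model_samples, target_samples, sigma)
    return k_xx.mean() + k_yy.mean() - 2.0 * k_xy.mean()

# Toy data (illustrative only): 8-bit strings.
rng = np.random.default_rng(0)
target = rng.integers(0, 2, size=(200, 8)).astype(float)   # uniform bits
same = rng.integers(0, 2, size=(200, 8)).astype(float)     # same distribution
biased = (rng.random((200, 8)) < 0.9).astype(float)        # bits mostly 1

mmd_same = mmd_squared(same, target)      # small: distributions match
mmd_biased = mmd_squared(biased, target)  # larger: distributions differ
print(mmd_same, mmd_biased)
```

A generative model trained with this kind of loss is pushed toward parameters for which the estimate between its samples and the target samples is small.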

The key contribution is that the loss function can be expressed in a form compatible with linear optics and efficiently estimated on classical hardware. This enables gradient-based learning with automatic differentiation, which is essential in modern machine learning workflows. As a result, the heavy optimization work can be done classically.

Classical Training, Quantum Deployment

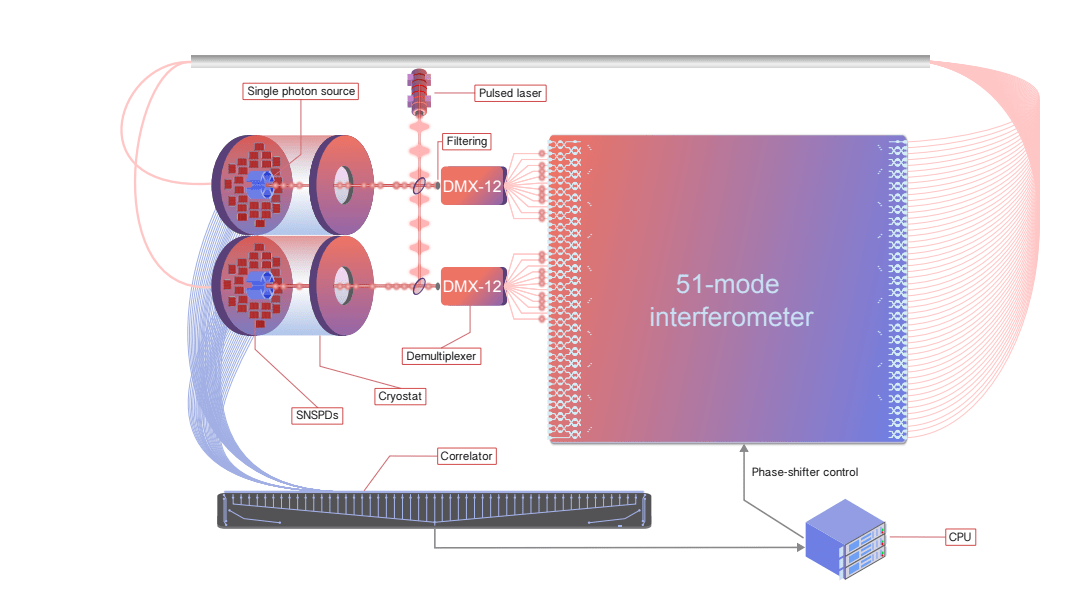

Efficient classical training does not mean there is no potential for quantum advantage. Indeed, at inference time, the learned model remains a genuinely photonic quantum model and is very likely to require deployment on real quantum hardware. In practice, the trained model generates new samples by running the photonic circuit and measuring the output.

This deployment stage corresponds to boson sampling, a photonic task widely believed to be hard to simulate classically at scale. The idea of training on classical hardware and deploying on quantum devices was first proposed by Xanadu researchers in “Train on classical, deploy on quantum: scaling generative quantum machine learning to a thousand qubits” [4]; this work extends the approach to photonic quantum circuits.
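At toy scale, the output distribution of a boson sampler can still be computed exactly, which helps illustrate what the deployed device samples from. The sketch below uses the standard permanent formula for the probability of an output pattern and checks that the probabilities sum to one. The helper names, the random interferometer, and the sizes (2 photons, 4 modes) are assumptions for illustration. Expanding the permanent over permutations is only feasible for a handful of photons, which is the root of the classical hardness at scale.

```python
import itertools
import math
import numpy as np

def permanent(a):
    """Permanent by direct expansion over permutations (tiny matrices only)."""
    n = a.shape[0]
    return sum(
        np.prod([a[i, p[i]] for i in range(n)])
        for p in itertools.permutations(range(n))
    )

def haar_unitary(m, rng):
    """Haar-random m x m unitary via QR of a complex Gaussian matrix."""
    z = (rng.normal(size=(m, m)) + 1j * rng.normal(size=(m, m))) / np.sqrt(2)
    q, r = np.linalg.qr(z)
    d = np.diag(r)
    return q * (d / np.abs(d))  # fix column phases so the result is Haar distributed

def output_probability(u, in_modes, out_modes):
    """Probability of detecting photons in out_modes given input in in_modes.

    Modes are listed with repetition, e.g. (0, 0) means two photons in mode 0.
    """
    # Rows follow the output modes, columns follow the input modes.
    sub = u[np.ix_(out_modes, in_modes)]
    norm = 1.0
    for modes in (in_modes, out_modes):
        for count in np.bincount(modes):
            norm *= math.factorial(int(count))
    return abs(permanent(sub)) ** 2 / norm

rng = np.random.default_rng(1)
m, n = 4, 2                      # 4 modes, 2 photons (toy sizes)
u = haar_unitary(m, rng)
in_modes = (0, 1)                # one photon in each of the first two modes

probs = {
    out: output_probability(u, in_modes, out)
    for out in itertools.combinations_with_replacement(range(m), n)
}
total = sum(probs.values())      # should be 1 up to rounding error
print(len(probs), total)
```

On hardware, no such enumeration is needed: each run of the circuit followed by photon detection yields one sample from this distribution.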

Overall, this approach makes it possible to combine practical classical optimization with quantum-native sampling at deployment.

Results and Applications

Simulations were performed with up to 16 photons, 256 modes, and more than 100,000 parameters, trained efficiently on a laptop. These results show that large photonic generative models can already be optimized with modest computational resources. With access to larger classical resources, such as high-performance computing clusters, much larger models or more complex datasets could be explored in future work.

Performance also depends on circuit architecture and initialization strategies. The so-called butterfly and Haar-compatible ansätze show stronger convergence, while warm starts and near-identity initializations can improve training stability.
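As a rough illustration of what a near-identity initialization can look like, the sketch below builds a unitary close to the identity by exponentiating a small random Hermitian generator. This construction, the function name `near_identity_unitary`, and the scale `epsilon` are assumptions made for illustration. The paper's actual parameterization and initialization scheme may differ.

```python
import numpy as np

def near_identity_unitary(m, epsilon, rng):
    """Return exp(i * epsilon * H) for a random Hermitian H (hypothetical scheme)."""
    a = rng.normal(size=(m, m)) + 1j * rng.normal(size=(m, m))
    h = (a + a.conj().T) / 2.0                 # random Hermitian generator
    w, v = np.linalg.eigh(h)                   # H = V diag(w) V^dagger
    # Exponentiate through the eigendecomposition: V diag(exp(i*eps*w)) V^dagger.
    return (v * np.exp(1j * epsilon * w)) @ v.conj().T

rng = np.random.default_rng(0)
m = 6
u_small = near_identity_unitary(m, 0.01, rng)  # close to the identity
u_large = near_identity_unitary(m, 1.0, rng)   # far from the identity

dist_small = np.linalg.norm(u_small - np.eye(m))
dist_large = np.linalg.norm(u_large - np.eye(m))
print(dist_small, dist_large)
```

Starting close to the identity means the circuit initially leaves photons near their input modes, so early updates act on a simple, well-understood output distribution.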

The models were evaluated on several types of datasets, including boson-sampling data, user-preference data, and bioinformatics datasets encoded as fixed-Hamming-weight bitstrings. The best results are obtained when the structure of the data naturally matches photonic sampling processes, especially in the case of boson-sampling datasets.
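Fixed-Hamming-weight bitstrings map naturally onto photon detection patterns: if each bit marks whether a photon was detected in a mode, and no two photons land in the same mode, then n photons in m modes always give an m-bit string with exactly n ones. The short sketch below makes this correspondence explicit. The one-bit-per-mode, collision-free encoding is an assumption for illustration and is not necessarily the encoding used in the paper.

```python
import itertools
import math
import numpy as np

def modes_to_bitstring(occupied_modes, num_modes):
    """Encode a collision-free detection pattern as a bitstring (1 = photon detected)."""
    bits = np.zeros(num_modes, dtype=int)
    bits[list(occupied_modes)] = 1
    return bits

num_modes, num_photons = 6, 3   # toy sizes

# Every way of placing 3 photons in 6 distinct modes.
patterns = [
    modes_to_bitstring(modes, num_modes)
    for modes in itertools.combinations(range(num_modes), num_photons)
]

# Each pattern has Hamming weight equal to the photon number,
# and there are "num_modes choose num_photons" of them.
weights = {int(p.sum()) for p in patterns}
print(len(patterns), math.comb(num_modes, num_photons), weights)
```

Data that already lives in this constrained space needs no extra post-processing to be compared with the samples a photonic circuit produces.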

These results suggest that photonic quantum generative models may be particularly effective for quantum datasets, and for photonic datasets in particular, which matches a trend observed in the quantum machine learning community.

Relevance for the MerLin Ecosystem

This approach integrates naturally within Quandela’s MerLin framework for photonic and hybrid quantum machine learning [2] and builds on Perceval, the platform for discrete-variable photonic quantum computing [3].

In particular, this method complements existing MerLin capabilities rather than replacing them. It adds a new tool for training generative photon-native models efficiently while remaining fully compatible with the broader software ecosystem.

Main Takeaways

- Photonic quantum generative models can be trained efficiently using a classical optimization loop based on the MMD.

- Deployment remains quantum via boson sampling, preserving the potential for quantum advantage.

- The method scales to large systems, making it relevant beyond toy models.

- The best results are observed when target data naturally aligns with photonic structures.

- The approach integrates within the MerLin ecosystem, offering a practical workflow for researchers and developers.

Conclusion: a practical path to scalable photonic quantum generative models

Efficient training is critical for practical quantum machine learning. By combining classical optimization with photon-native deployment, Quandela demonstrates a credible route toward scalable photonic quantum generative models.

Future milestones include deployment on real hardware, noise-aware training strategies, benchmarking across broader applications, and the use of larger classical computational resources to train more complex models or handle larger datasets. The key insight is clear: training photonic quantum generative models efficiently is now within practical reach, marking an important step forward for photonic quantum machine learning.

References

[1] Felix Gottlieb, Rawad Mezher, Brian Ventura, Shane Mansfield, and Alexia Salavrakos. “Efficient training of photonic quantum generative models.” arXiv:2603.08793 (2026).

[2] Cassandre Notton, Benjamin Stott, Philippe Schoeb, Anthony Walsh, Grégoire Leboucher, Vincent Espitalier, Vassilis Apostolou, Louis-Félix Vigneux, Alexia Salavrakos, and Jean Senellart. “MerLin: A discovery engine for photonic and hybrid quantum machine learning.” arXiv:2602.11092 (2026).

[3] Nicolas Heurtel, Andreas Fyrillas, Grégoire de Gliniasty, Raphaël Le Bihan, Sébastien Malherbe, Marceau Pailhas, Eric Bertasi, Boris Bourdoncle, Pierre-Emmanuel Emeriau, Rawad Mezher, Luka Music, Nadia Belabas, Benoît Valiron, Pascale Senellart, Shane Mansfield, and Jean Senellart. “Perceval: A software platform for discrete variable photonic quantum computing.” Quantum, 7:931 (2023).

[4] Erik Recio-Armengol, Shahnawaz Ahmed, and Joseph Bowles. “Train on classical, deploy on quantum: scaling generative quantum machine learning to a thousand qubits.” arXiv:2503.02934 (2025).