PUBLICATIONS

Discover the work of our talented researchers

"It's by logic that we prove, but by intuition that we discover" – Henri Poincaré

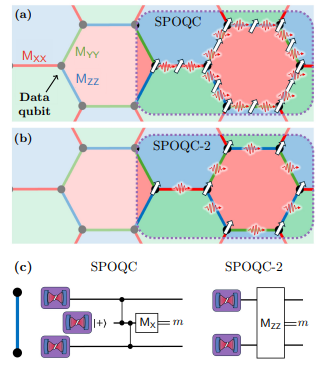

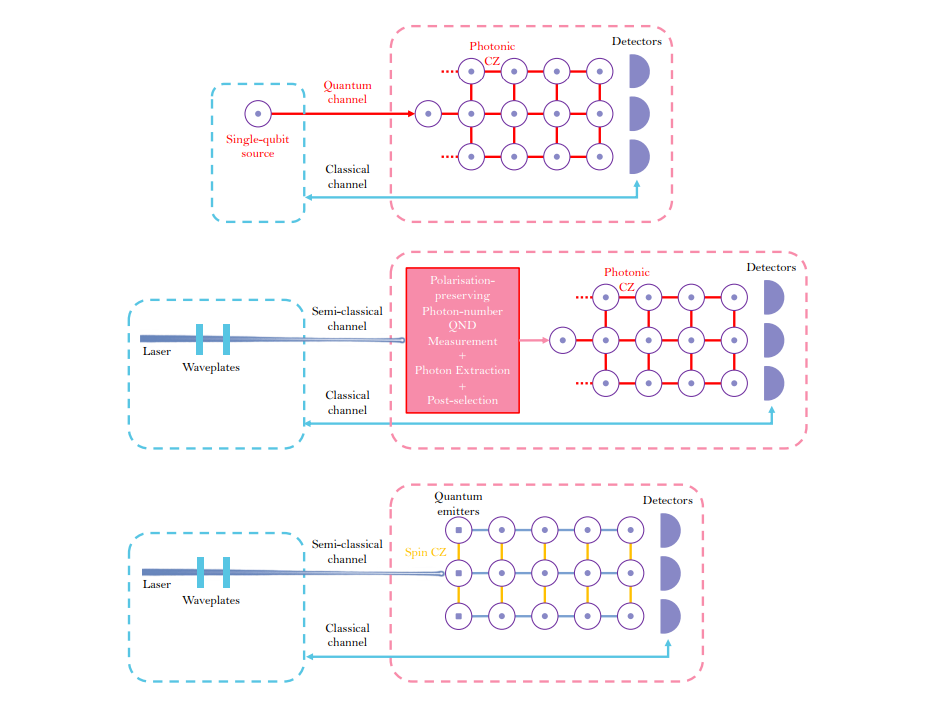

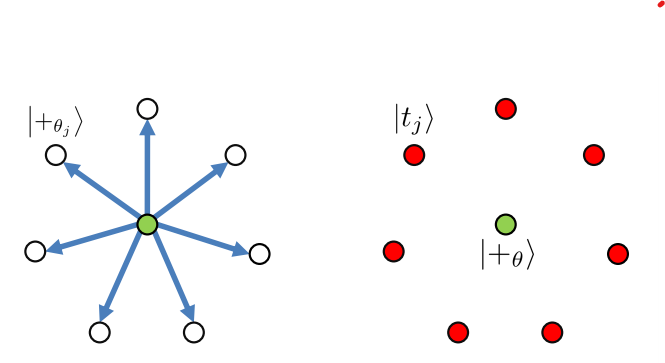

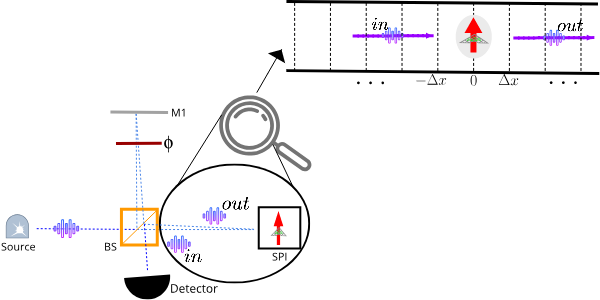

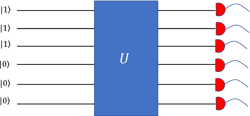

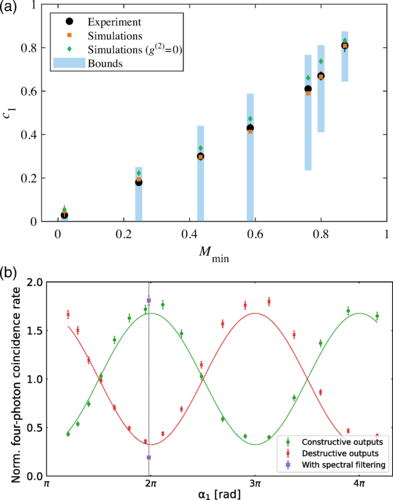

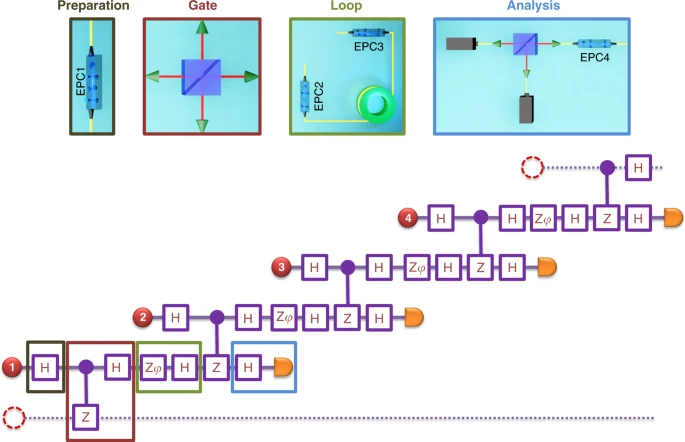

Monitoring the generation of photonic linear cluster states with partial measurements

Photon-native quantum algorithms

Indistinguishability of remote quantum dot-cavity single-photon sources

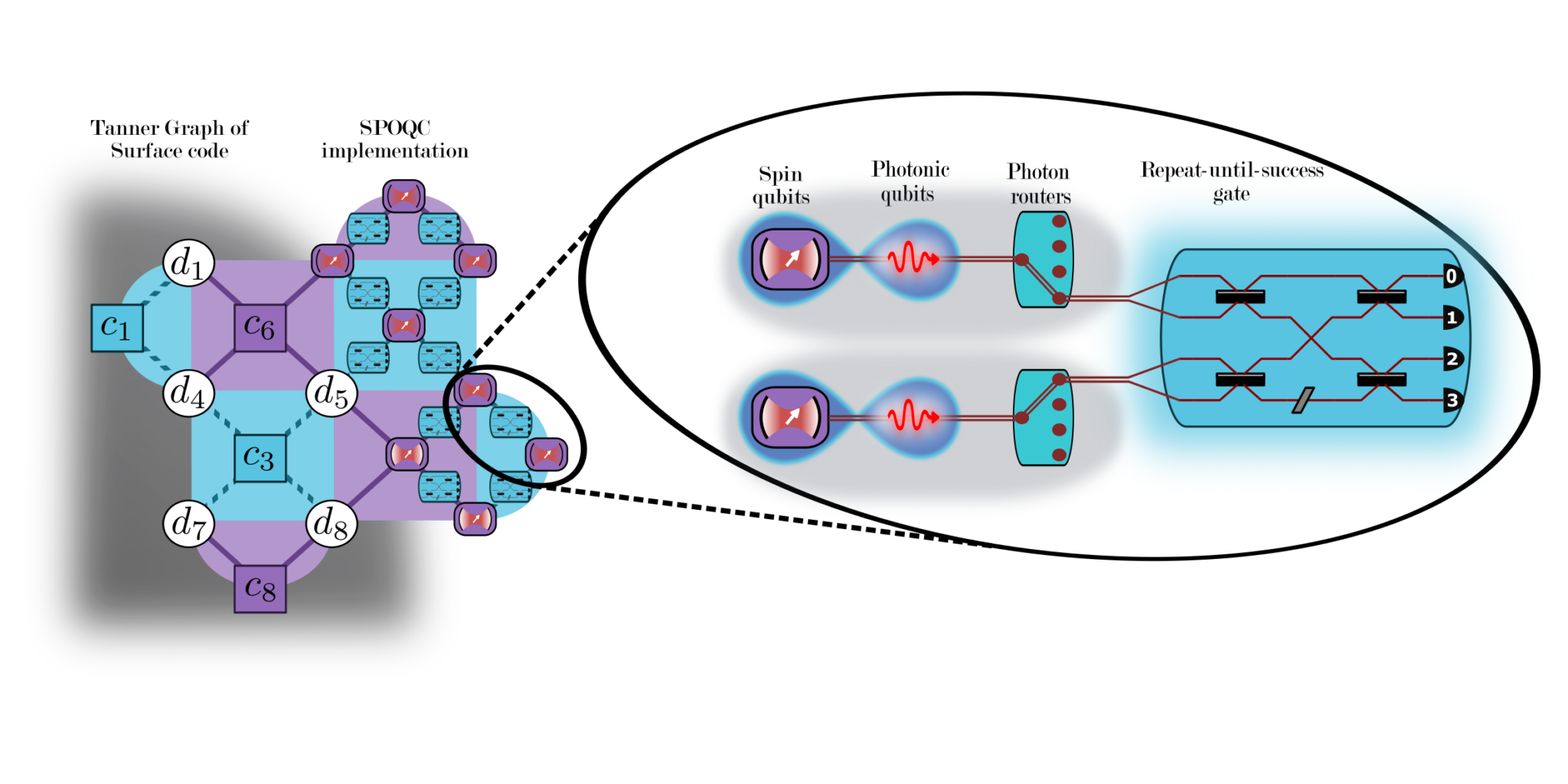

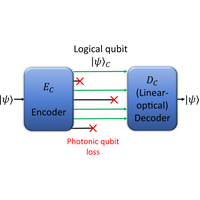

Minimizing resource overhead in fusion-based quantum computation using hybrid spin-photon devices

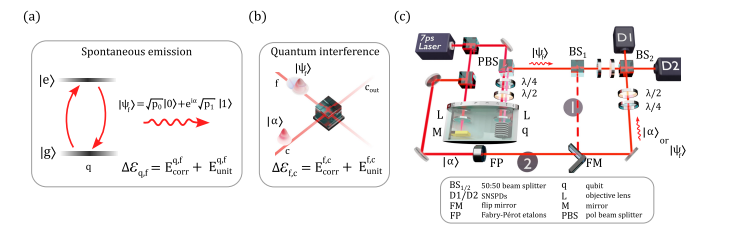

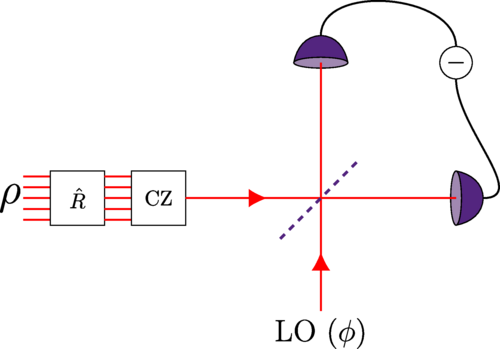

Quantum interferences and gates with emitter-based coherent photon sources

Quantum circuit compression using qubit logic on qudits

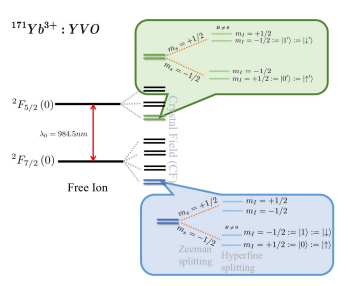

Comparing the performance of practical two-qubit gates for individual Yb ions in yttrium orthovanadate

Deterministic and reconfigurable graph state generation with a single solid-state quantum emitter

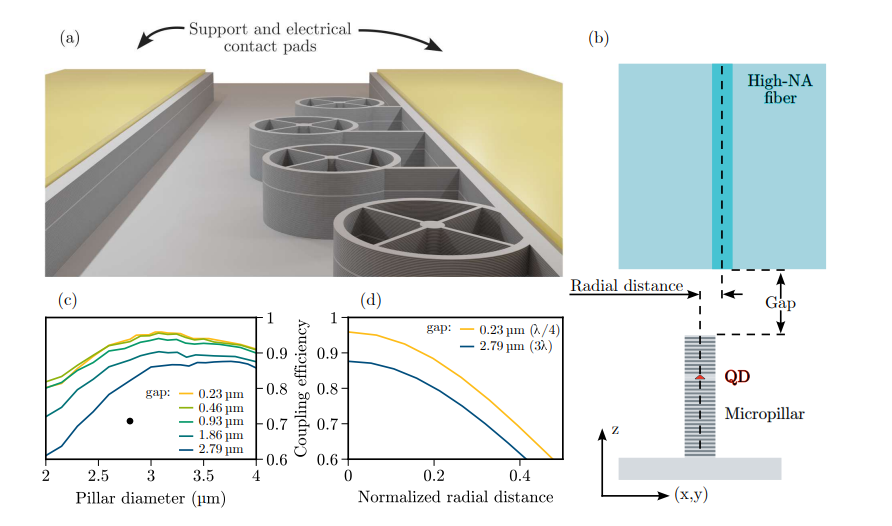

Efficient fiber-pigtailed source of indistinguishable single photons

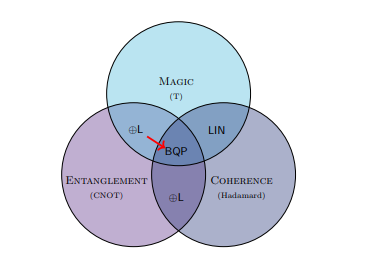

On the role of coherence for quantum computational advantage

Enhanced Fault-tolerance in Photonic Quantum Computing: Floquet Code Outperforms Surface Code in Tailored Architecture

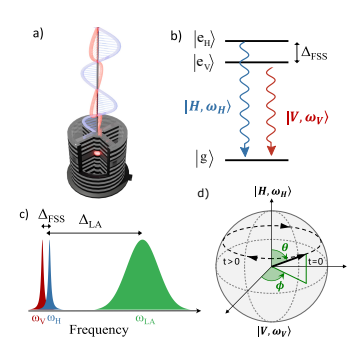

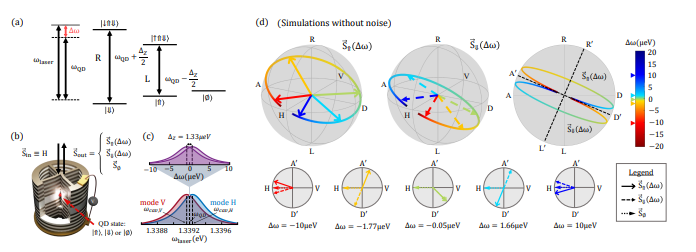

A deterministic and efficient source of frequency-polarization hyper-encoded photonic qubits

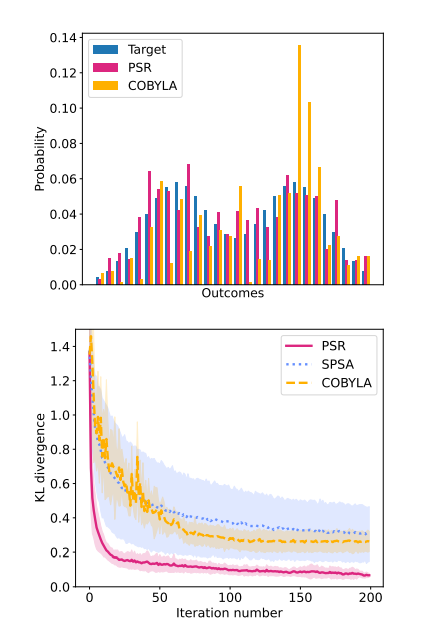

A photonic parameter-shift rule: enabling gradient computation for photonic quantum computers

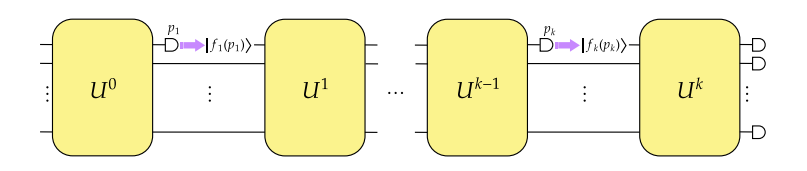

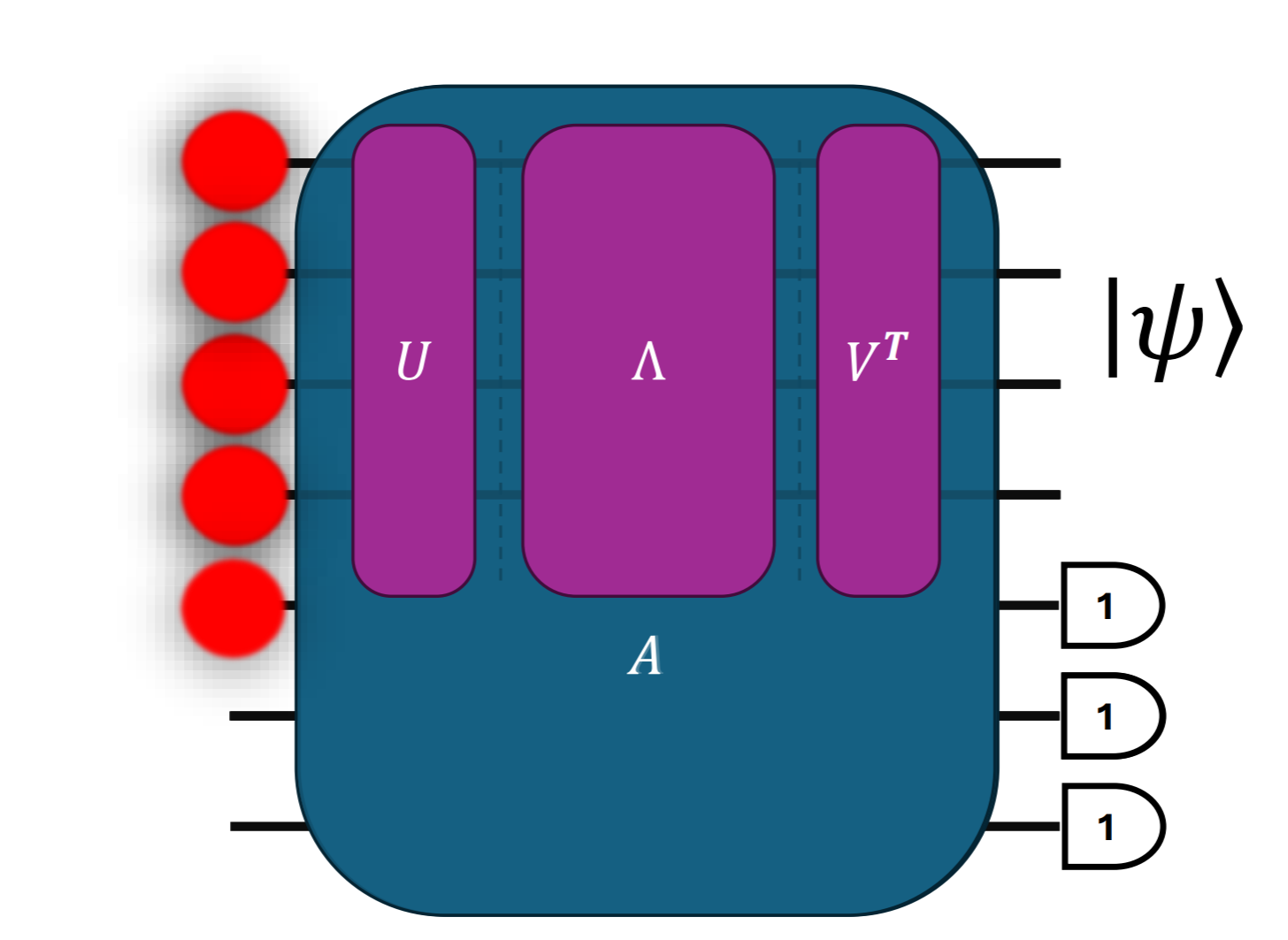

Towards quantum advantage with photonic state injection

Towards practical secure delegated quantum computing with semi-classical light

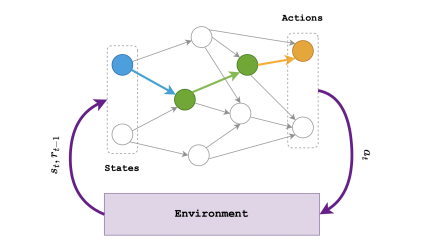

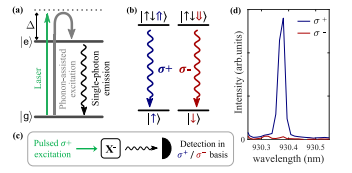

Entanglement-enhanced Quantum Reinforcement Learning: an Application using Single-Photons

Connecting quantum circuit amplitudes and matrix permanents through polynomials

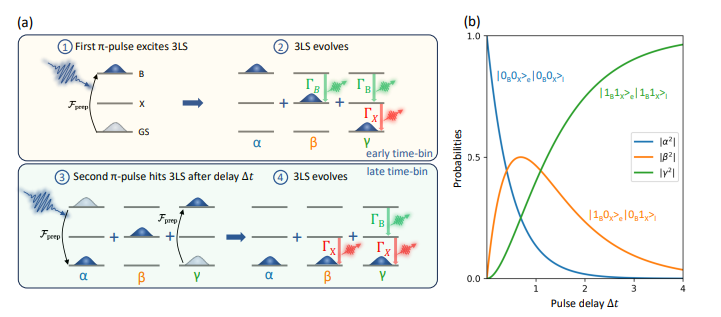

Towards Photon-Number-Encoded High-dimensional Entanglement from a Sequentially Excited Quantum Three-Level System

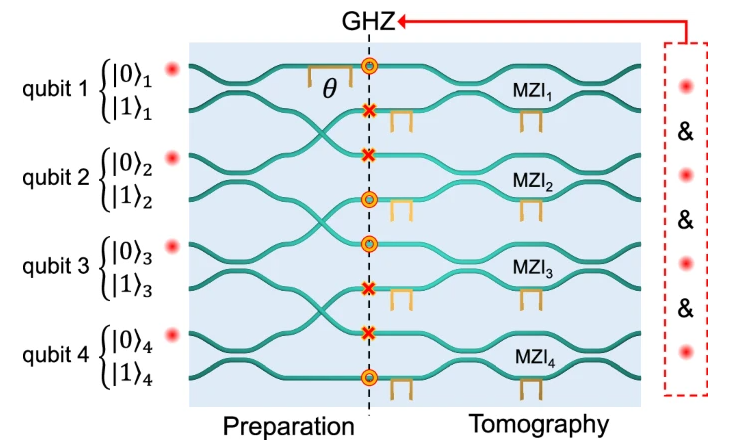

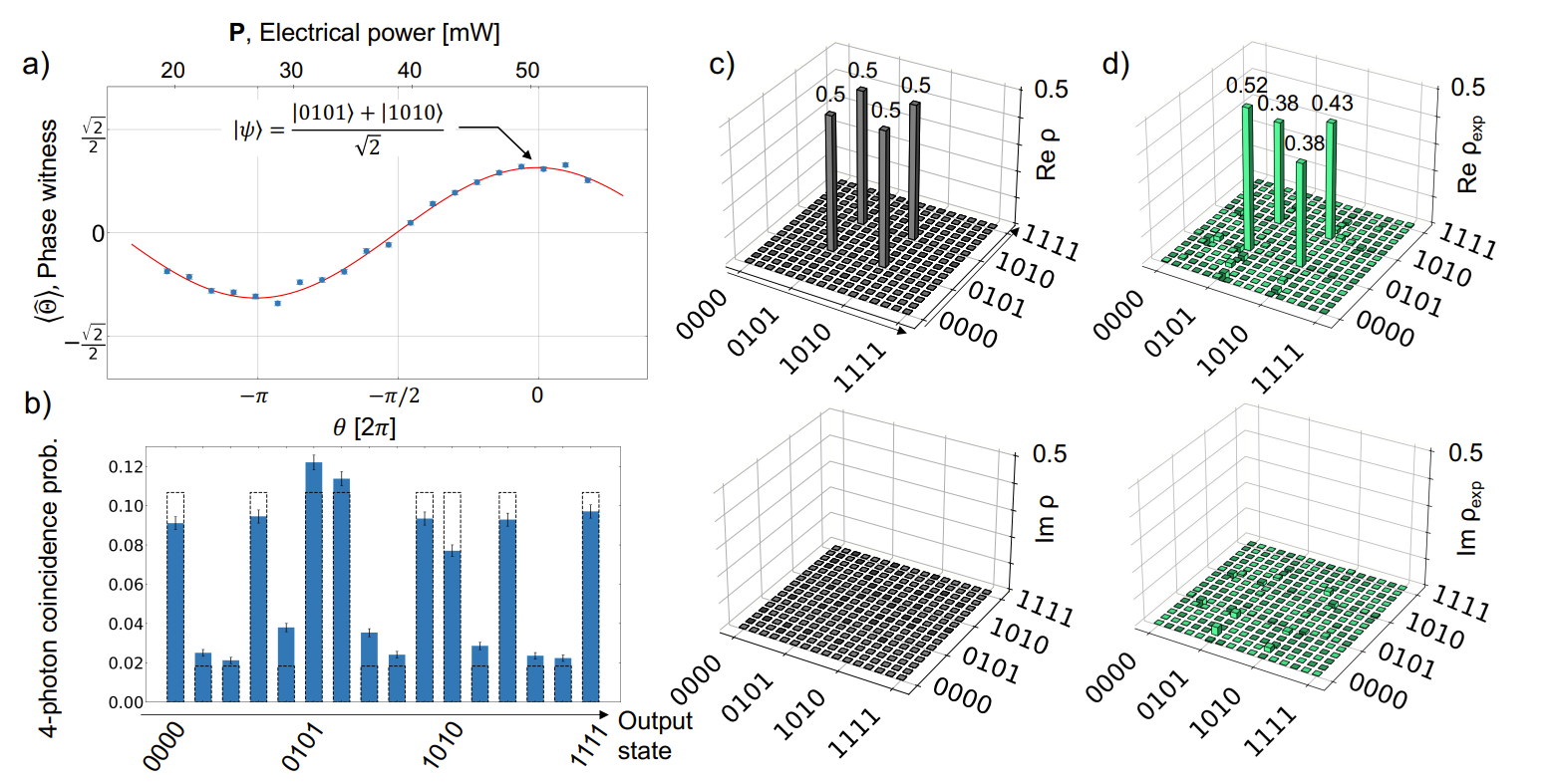

High-fidelity four-photon GHZ states on chip

Photonic quantum generative adversarial networks for classical data

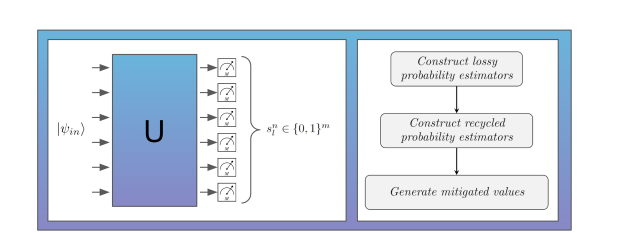

Mitigating photon loss in linear optical quantum circuits: classical post processing methods outperforming post selection

Simple rules for two-photon state preparation with linear optics

Faster and shorter synthesis of Hamiltonian simulation circuits

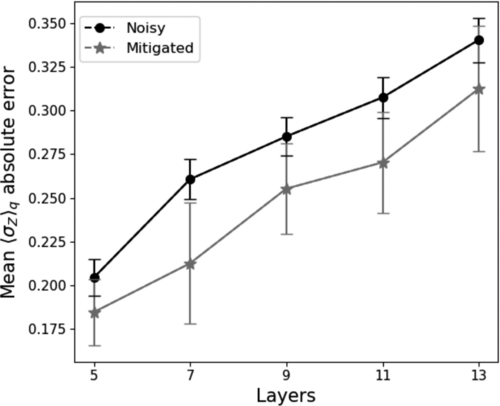

An error-mitigated photonic quantum circuit Born machine

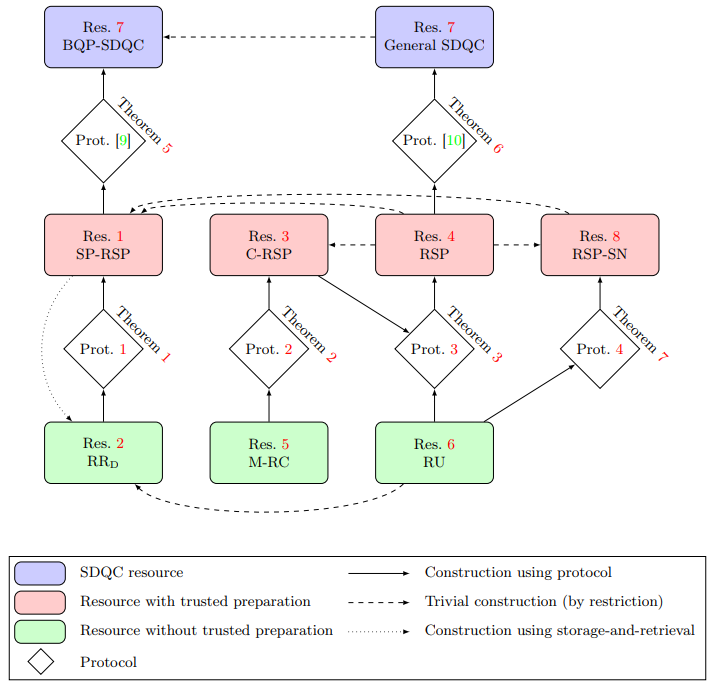

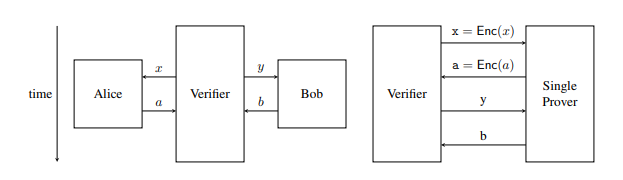

Verification of Quantum Computations without Trusted Preparations or Measurements

Four-photon GHZ states generated on a laser written integrated platform

Quantum bounds for compiled XOR games and d-outcome CHSH games

A Complete Graphical Language for Linear Optical Circuits with Finite-Photon-Number Sources and Detectors

Spin Noise Spectroscopy of a Single Spin using Single Detected Photons

Solid-State Single-Photon Sources: Recent Advances for Novel Quantum Materials

Photonic quantum interference in the presence of coherence with vacuum

A Spin-Optical Quantum Computing Architecture

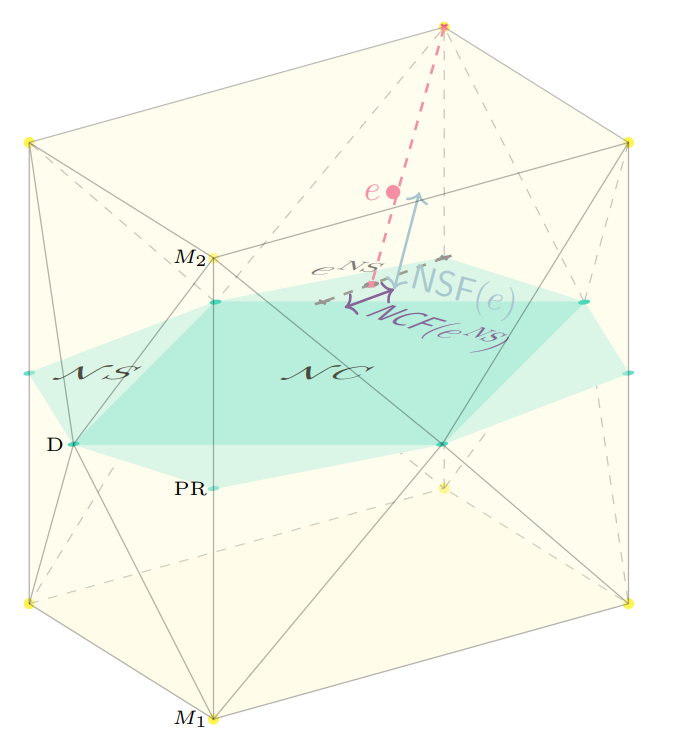

Corrected Bell and Noncontextuality Inequalities for Realistic Experiments

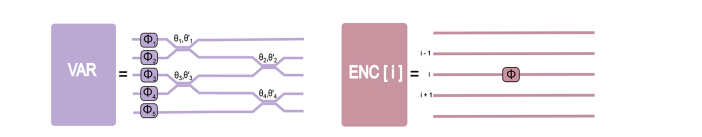

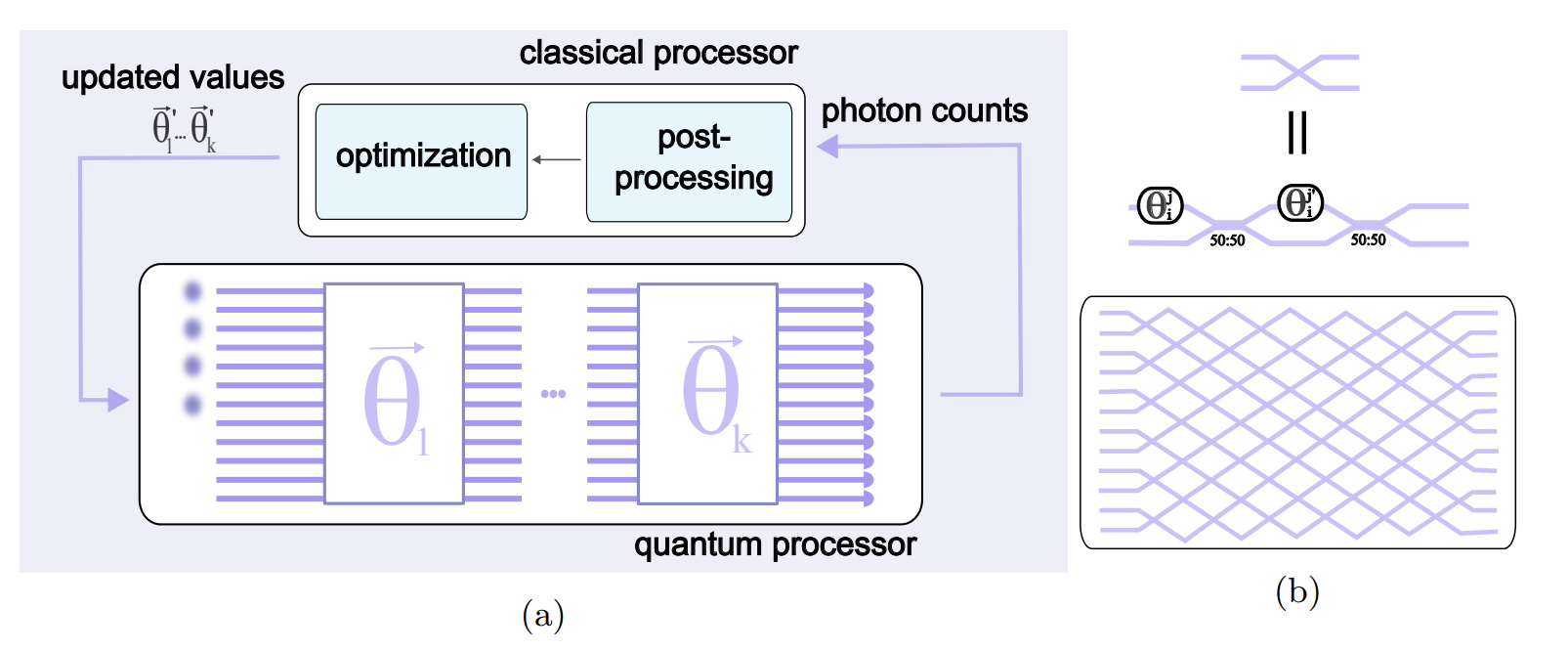

Scalable machine learning-assisted clear-box characterization for optimally controlled photonic circuits

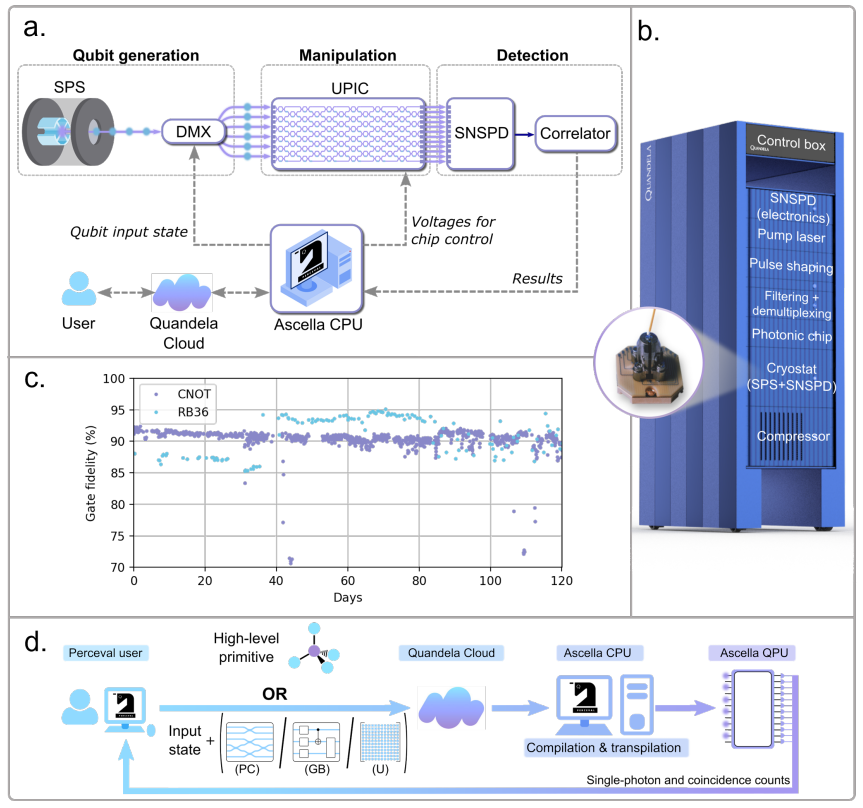

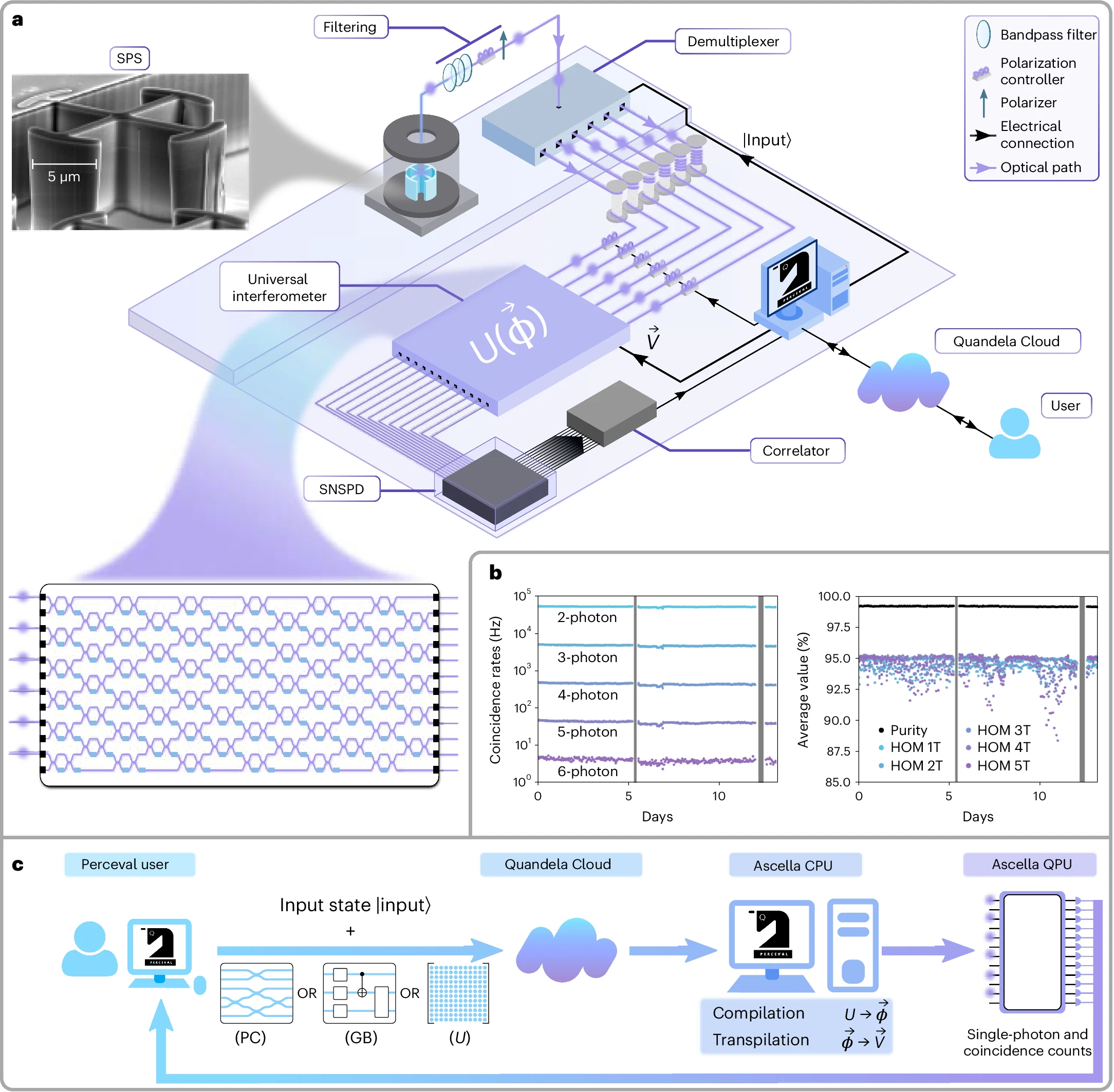

One nine availability of a Photonic Quantum Computer on the Cloud toward HPC integration

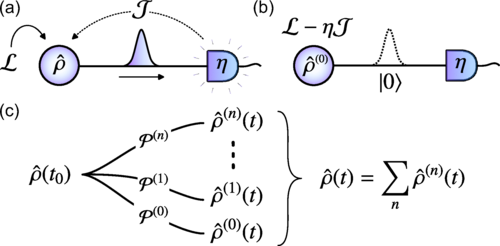

Simulating photon counting from dynamic quantum emitters by exploiting zero-photon measurements

Quantum error-correcting codes with a covariant encoding

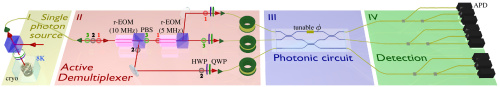

A versatile single-photon-based quantum computing platform

Asymmetric Quantum Secure Multi-Party Computation With Weak Clients Against Dishonest Majority

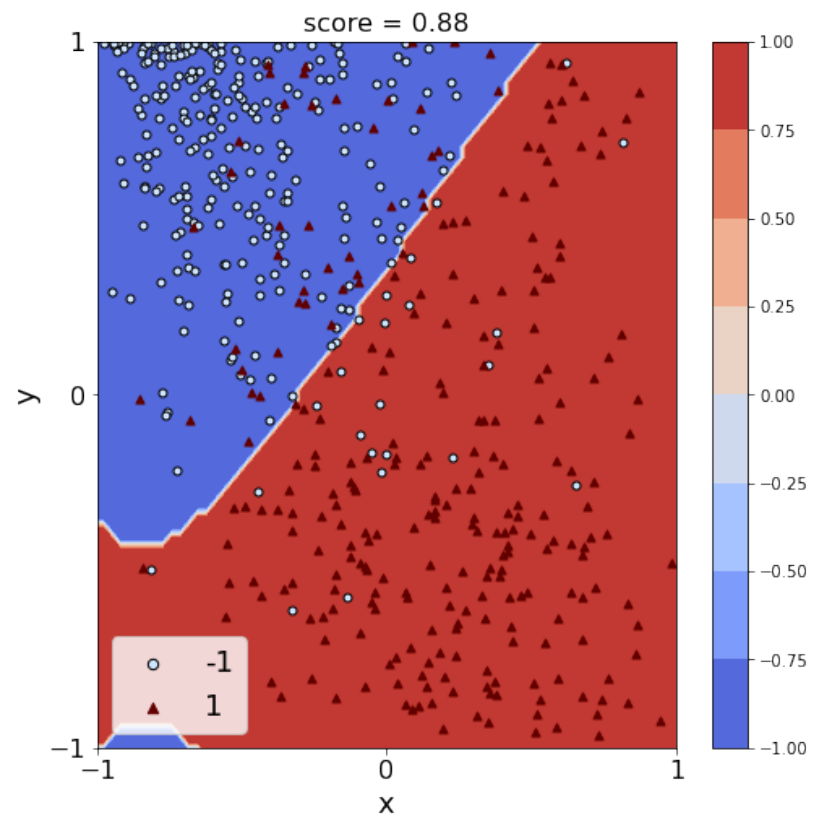

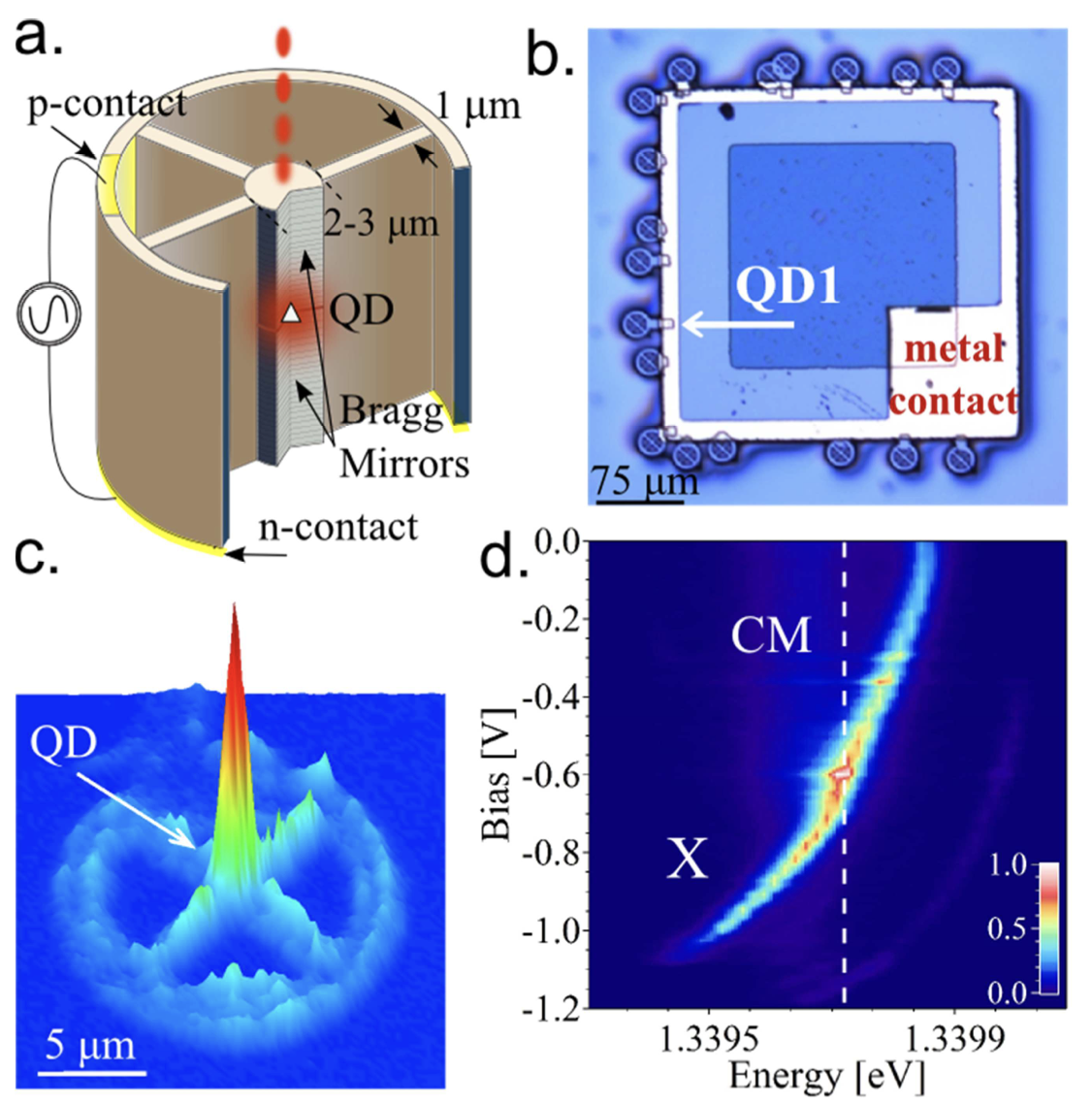

Spin-photon entanglement and photonic cluster states generation with a semiconductor quantum dot in a cavity

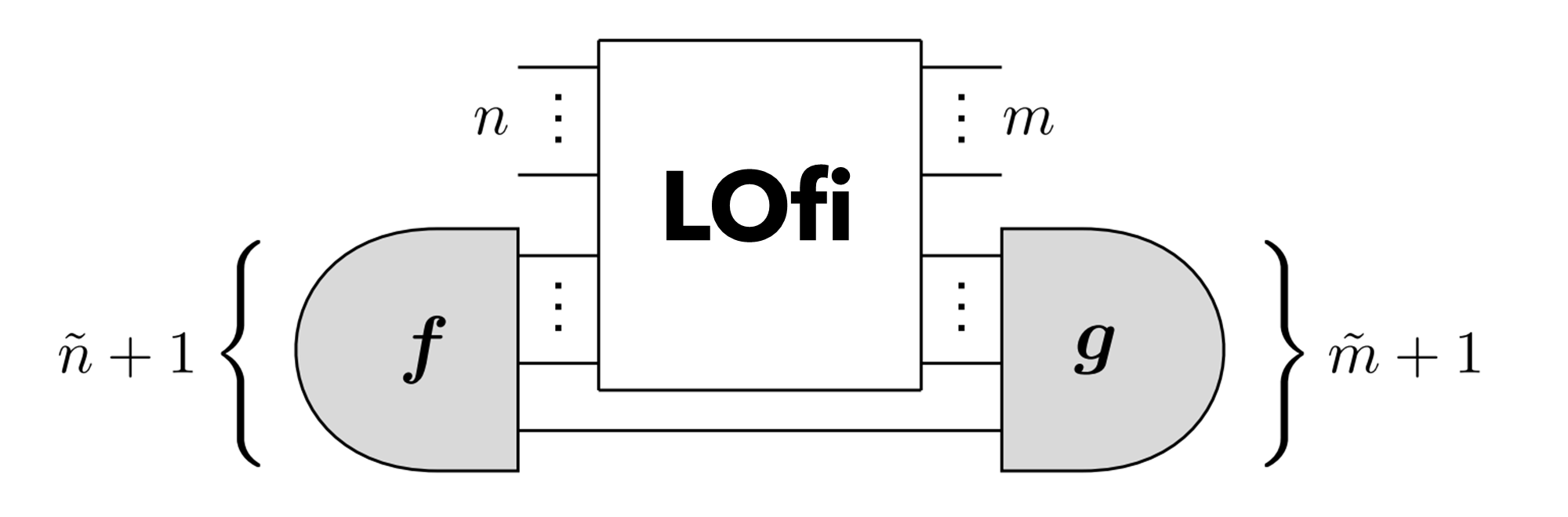

Linear Optical Logical Bell State Measurements with Optimal Loss-Tolerance Threshold

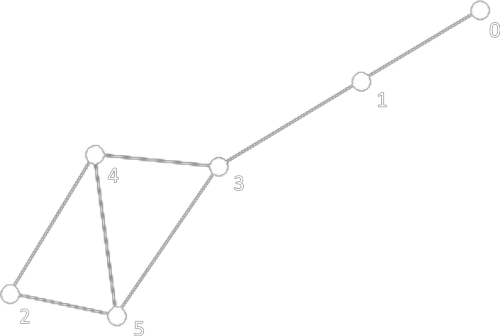

Solving graph problems with single-photons and linear optics

Certified randomness in tight space

High-fidelity generation of four-photon GHZ states on-chip

Photonic Quantum Computing For Polymer Classification

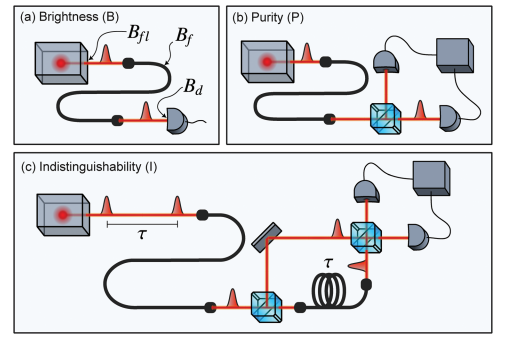

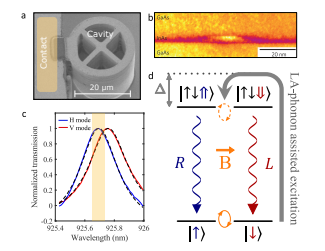

High-rate entanglement between a semiconductor spin and indistinguishable photons

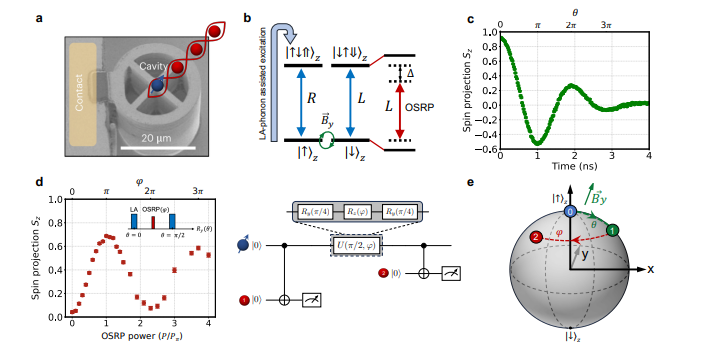

Probing the dynamics and coherence of a semiconductor hole spin via acoustic phonon-assisted excitation

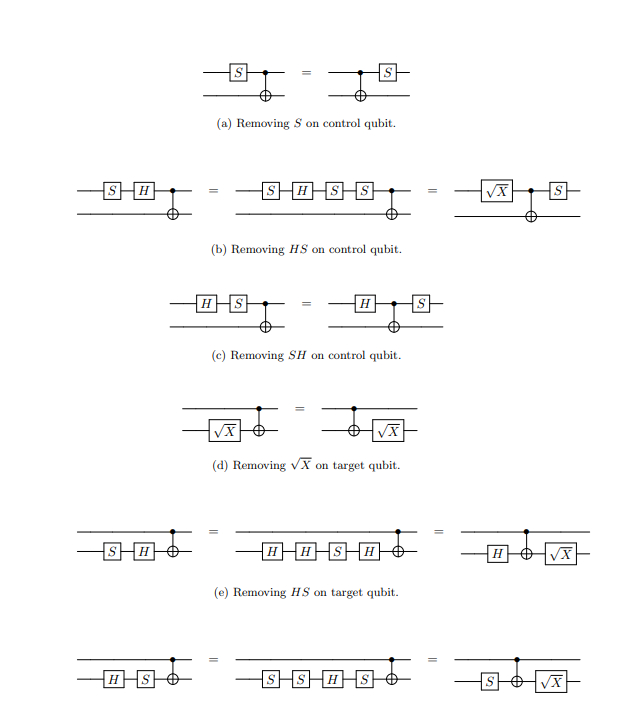

A Complete Equational Theory for Quantum Circuits

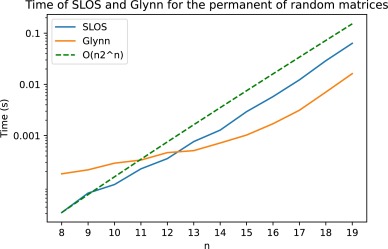

Strong Simulation of Linear Optical Processes

A Framework for Verifiable Blind Quantum Computation

Energy-efficient quantum non-demolition measurement with a spin-photon interface

LOv-Calculus: A Graphical Language for Linear Optical Quantum Circuits

Quantum Advantage in Information Retrieval

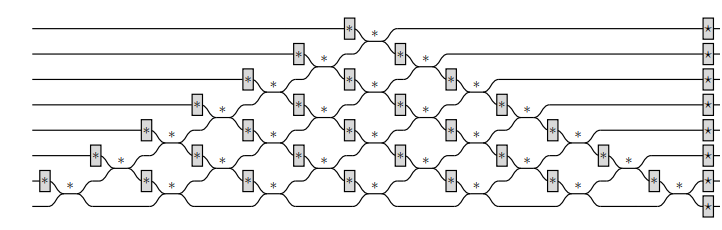

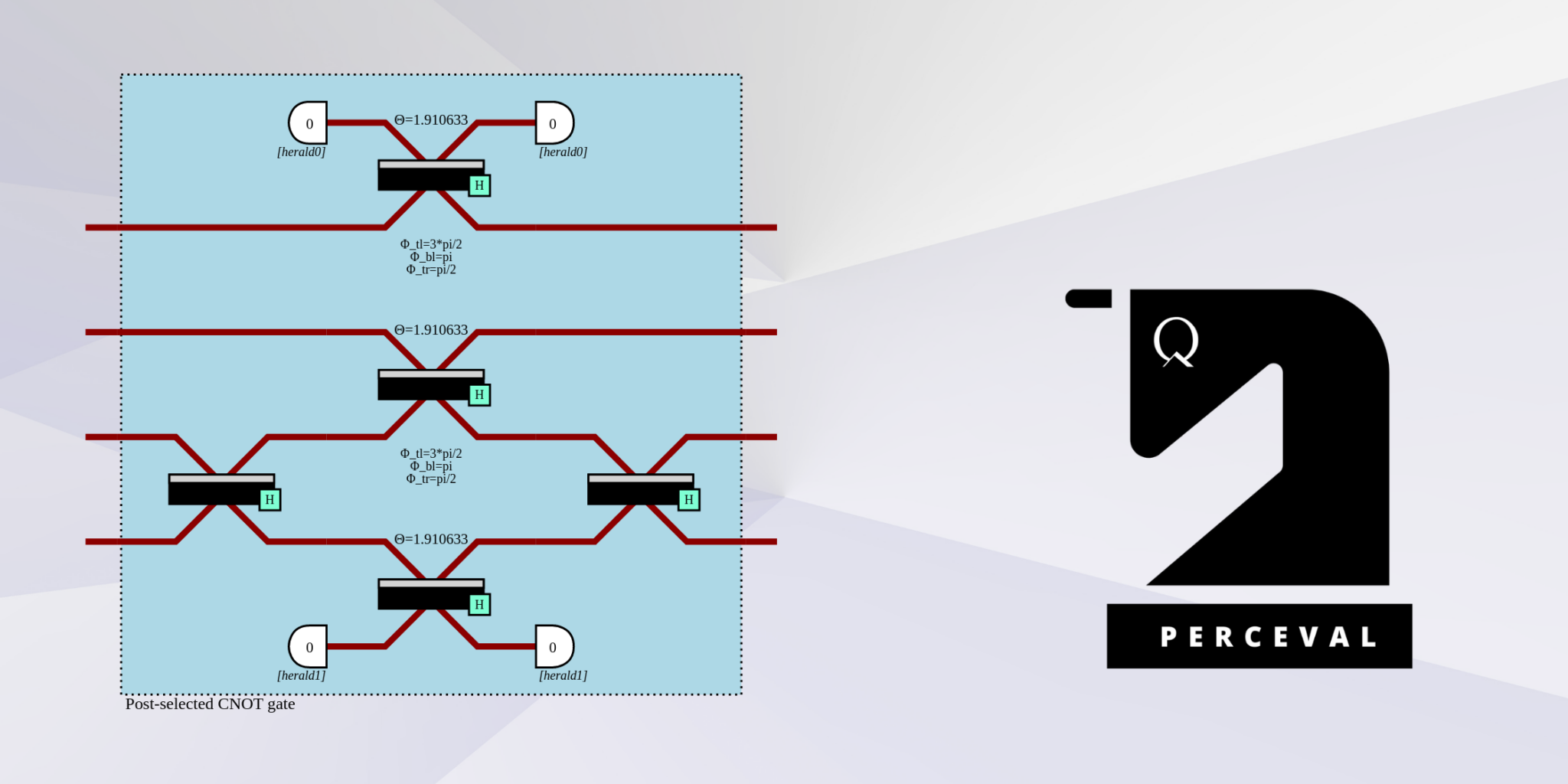

Perceval: A Software Platform for Discrete Variable Photonic Quantum Computing

Assessing the quality of near-term photonic quantum devices

Experimental Analysis of Energy Transfers between a Quantum Emitter and Light Fields

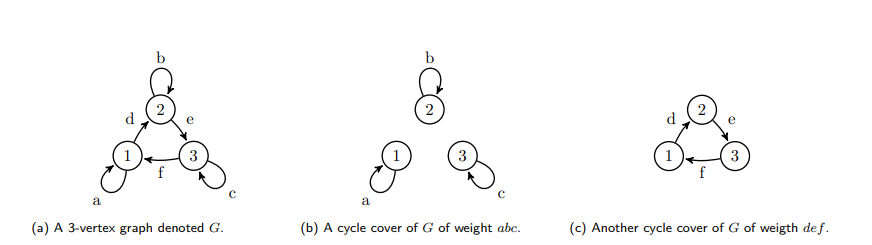

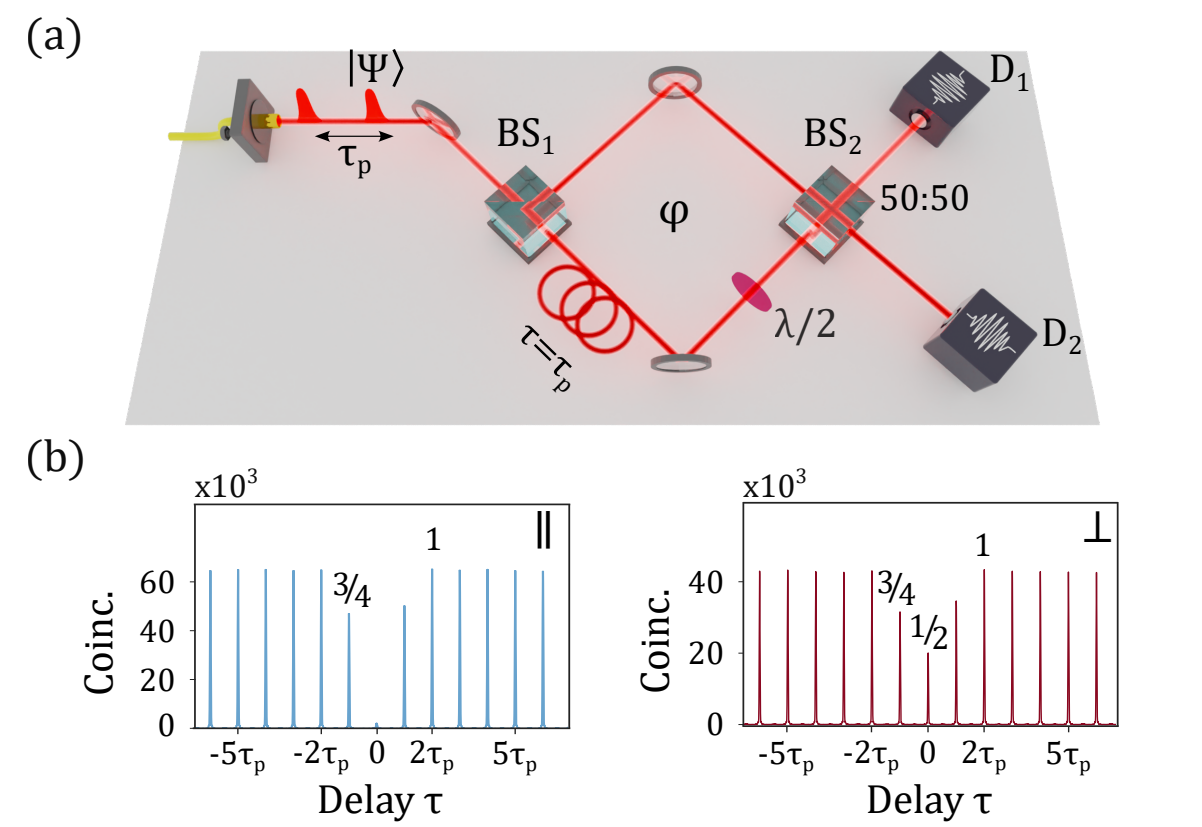

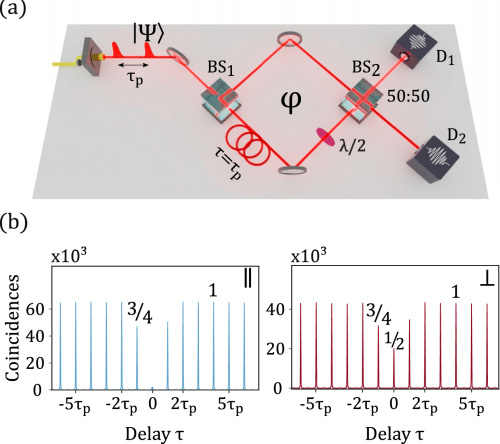

Quantifying n-photon indistinguishability with a cyclic integrated interferometer

Contextuality and Wigner Negativity Are Equivalent for Continuous-Variable Quantum Measurements

Mitigating errors by quantum verification and post-selection

Photon-number entanglement generated by sequential excitation of a two-level atom

Bright Polarized Single-Photon Source Based on a Linear Dipole

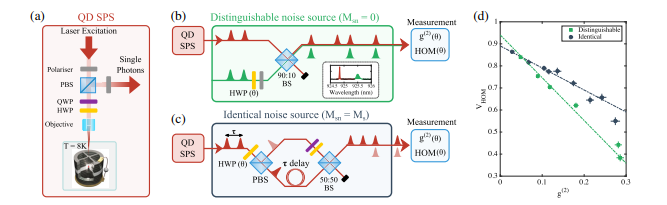

Hong-Ou-Mandel Interference with Imperfect Single Photon Sources

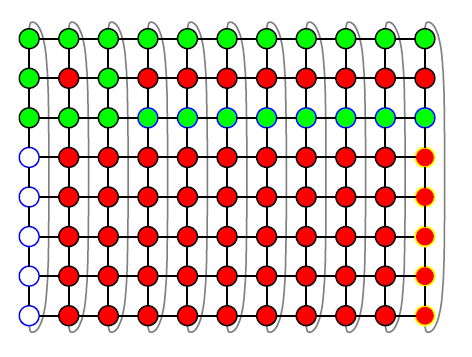

Sequential generation of linear cluster states from a single photon emitter

Generation of non-classical light in a photon-number superposition

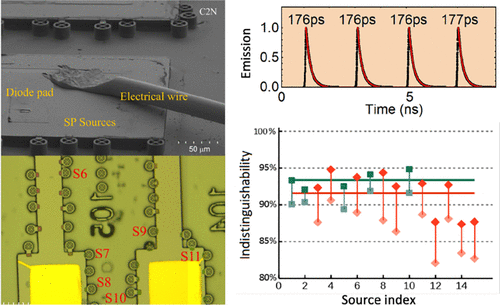

Reproducibility of High-Performance Quantum Dot Single-Photon Sources

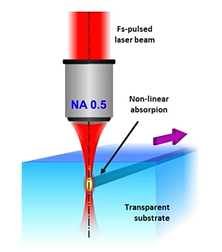

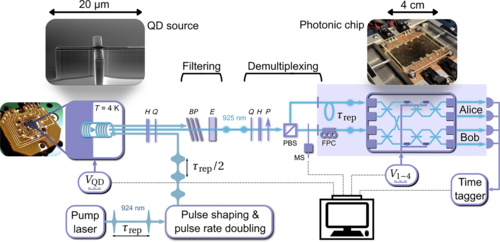

Interfacing scalable photonic platforms: solid-state based multi-photon interference in a reconfigurable glass chip

Near-optimal single-photon sources in the solid state

Our research teams

Quandelians are a multidisciplinary, international and talented group of high-performing professionals who approach problems from different perspectives. We think Quandela is a unique place to work. Our team spans many locations, lifestyles and backgrounds. Together we create a working environment that feels safe for everyone. We always welcome and respect individuality, reinforcing the importance of a diverse team that is nurtured to grow and evolve.

Research – Algorithms

The Algorithms Team develops, optimizes, and implements cutting-edge quantum algorithms tailored to Quandela’s hardware. They focus on driving applications and innovations in photonic quantum computing, bridging the gap between theoretical potential and practical implementation.

“Photonic quantum algorithms transform the potential of quantum mechanics into actionable insights, unlocking the power of photons to solve intricate challenges and illuminate the way for tomorrow’s quantum breakthroughs.”

Pierre-Emmanuel Emeriau – Head of Algorithms Team

Research – Device Theory

The Device Theory Team enhances device performance through rigorous theoretical models, computational simulations, error mitigation protocols, and benchmarking techniques. They apply advanced mathematics to refine experiments and push the boundaries of quantum device performance.

“Mathematics sharpens our understanding of physics, turning fundamental quantum laws into tools for advancing hardware, refining experiments, and pushing the boundaries of quantum device performance.”

Stephen Wein – Head of Device Theory Team

Research – Scalable Architecture

The Scalable Architecture Team investigates how to arrange and operate Quandela’s pioneering technology to perform large and trustworthy quantum computations. Their areas of expertise include compilation, delegated computing and quantum error correction.

“A good architecture is a bridge between complicated physics and abstract computer science. It orchestrates spins and photons to execute computations for as long as possible and on as many qubits as possible, both in the present pre-fault-tolerant world and in the error-corrected future.”

Boris Bourdoncle – Head of Scalable Architecture Team

Research – Semiconductors

The Research Semiconductors Team focuses on developing innovative approaches to optimize semiconductor single-photon source performance. They explore novel source designs and advanced addressing schemes, aiming to produce high-performance single-photon sources at a large scale for fault-tolerant photonic quantum computing systems.

“Our goal is to pursue new methods for creating large entangled states of photons with high probability and fidelity. As a key part of the joint laboratory formed by Quandela and the C2N, the team also serves as an important connection between Quandela and the academic GOSS group led by Pascale Senellart.”

Sébastien Boissier – Head of Research Semiconductors Team

Quantum Applications

The Quantum Applications Team bridges the gap between industry use-cases, state-of-the-art quantum computing algorithms, and product integration. They develop trust in photonic quantum applications for real-world problems by designing and enhancing cutting-edge algorithms, supporting clients in their quantum transformation journey.

“We support our clients in their quantum transformation. We give classes about photonic quantum computing, identify use-cases in the client’s industry and develop proofs of concept that then evolve into in-production algorithms. All those ingredients are crucial for big companies to make their quantum transformation successful.”

Arno Ricou – Head of Quantum Applications Team

Products – Optics, Electronics and QPU Assembling

The Optics, Electronics and QPU Assembling Team develops cutting-edge optical systems and optimal control methods for photonic quantum computing. They integrate and assemble these components to create MosaiQ – data center-ready quantum processing units. The team’s work is crucial in advancing the scalability and efficiency of photonic quantum computers.

“The scalability of photonic quantum computers hinges on our ability to manufacture complex opto-electronic systems. As we push the boundaries of photonic integrated circuits fabrication, we’re not just scaling up qubit numbers, but also enhancing the precision and efficiency of quantum operations. The transition to large-scale manufacturing of these intricate systems will be the key to unlocking the full potential of photonic quantum computing.”

Nicolas Maring – Head of Optics, Electronics and QPU Assembling Team

Products – Semiconductor Sources Production

The Semiconductor Sources Production team produces one of the world’s brightest quantum single-photon sources using cutting-edge nanofabrication technologies. In each quantum computer, their source serves as the heart, delivering single photons through the fiber channels and circuits of the QPU. Just as there’s no substitute for a heart, their photon source is irreplaceable!

“Competing and embracing yourself every day is the art of making everything better!”

Thi Huong Au – Head of Semiconductor Sources Production Team

Software

The Software Engineer Team develops the quantum computing framework Perceval and manages Quandela’s web and cloud infrastructure. They play a dual role: leading software production with regular Perceval releases and new customer APIs, while also providing internal support to other teams through tool development and code reviews.

“People imagine that driving a quantum computer is something extremely complicated, and this is partly true. In our team, we’re in the business of transforming this into ‘just’ a complex operation, with each element simplified as far as possible. This involves using Python, then sending a computation order via the web to our quantum computers or optimized simulators.”

Mario Valdivia – Head of Software Engineer Team