Benchmarking whether many photons are truly indistinguishable is essential for scaling photonic quantum hardware, but existing methods become impractical as photon number grows. In this work, we introduce a protocol that estimates genuine n-photon indistinguishability with constant (or near-constant) sampling cost, an exponential improvement over the prior state of the art. We show how the symmetry of a quantum Fourier transform interferometer can be used to construct an efficient, hardware-friendly benchmark, and demonstrate its performance on Quandela’s photonic processor.

Introduction

Indistinguishable photons are a key resource for photonic quantum technologies because they enable multi-photon interference. Any distinguishability between photons, even slight, degrades that interference and limits the performance of photonic quantum devices and algorithms. Reaching high levels of indistinguishability is therefore crucial to achieving quantum advantage on photonic platforms.

For two photons, a well-known experimental effect, the Hong–Ou–Mandel (HOM) dip, provides a simple and efficient benchmark of how indistinguishable they are. Beyond two photons, however, the question “how indistinguishable are my photons?” becomes much harder to answer in a way that scales efficiently.
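As a small illustration of why the HOM dip works as a two-photon benchmark, the sketch below uses the standard rule that fully indistinguishable photons interfere with an amplitude given by the permanent of the interferometer matrix, while distinguishable photons simply combine probabilities. It assumes two pure single photons with mode overlap |⟨ψ1|ψ2⟩|² and a lossless 50:50 beam splitter. Under these assumptions the coincidence probability is (1 − |⟨ψ1|ψ2⟩|²)/2, so the depth of the dip reads out the two-photon overlap directly. The helper names are our own and purely illustrative.

```python
import itertools

import numpy as np


def permanent(m):
    """Permanent by brute force over permutations (fine for tiny matrices)."""
    n = m.shape[0]
    return sum(
        np.prod([m[i, p[i]] for i in range(n)])
        for p in itertools.permutations(range(n))
    )


# Balanced 50:50 beam splitter (one common phase convention).
BS = np.array([[1, 1], [1, -1]]) / np.sqrt(2)


def hom_coincidence(overlap_sq):
    """Probability of one photon in each output port of the beam splitter.

    overlap_sq = |<psi_1|psi_2>|^2 for two pure single photons. The second photon
    is split into a part identical to the first (weight overlap_sq) and an
    orthogonal, fully distinguishable part (weight 1 - overlap_sq).
    """
    p_indist = abs(permanent(BS)) ** 2   # quantum case |Perm(U)|^2, zero for 50:50
    p_dist = permanent(np.abs(BS) ** 2)  # classical case Perm(|U|^2), equal to 1/2
    return overlap_sq * p_indist + (1 - overlap_sq) * p_dist


for m in (1.0, 0.9, 0.0):
    print(f"overlap {m:.1f}: coincidence probability {hom_coincidence(m):.3f}")
```

The same permanent-versus-probability contrast is what the multi-photon benchmarks below exploit, but the number of distinguishability patterns grows quickly with photon number.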

For multiple photons, the key metric is genuine n-photon indistinguishability (GI): the probability that the entire n-photon state is made up of fully indistinguishable photons. GI is strongly tied to scalability. Yet the only exact protocol previously available relied on the statistics of extremely rare events, so the number of experimental runs it needs grows exponentially with n.

In this article, we introduce a new approach that removes this exponential barrier: a benchmarking protocol based on a quantum Fourier transform interferometer, which makes GI measurable with constant sample complexity for prime photon numbers and with sub-polynomial scaling otherwise.

Why previous approaches to benchmarking multiphoton indistinguishability do not scale

Two-photon tests can look “good” even when the full n-photon state is not, and extrapolating their results to n photons does not capture the true level of indistinguishability. GI, by contrast, captures whether the whole experiment genuinely behaves as a fully indistinguishable multi-photon interference process.

The previous exact method for measuring GI is based on a particular set-up called a cyclic interferometer (CI), which is arranged so that its outputs can be related directly to GI. It works by selecting a specific family of outputs that behave identically for any input containing at least one distinguishable photon, which allows GI to be extracted from the observed output pattern.

The downside is that the outputs in this family are extremely unlikely. As a result, estimating GI to a fixed precision requires a number of samples that grows exponentially with n. This exponential overhead severely limits scalability beyond a few photons.

A QFT-based benchmark for genuine n-photon indistinguishability

Why the quantum Fourier transform (QFT)?

Our protocol uses an n-mode quantum Fourier transform (QFT) interferometer with one photon injected into each input mode. A QFT interferometer is a highly symmetric optical network that makes quantum interference patterns especially clean and structured. For fully indistinguishable photons, the QFT obeys strong suppression laws under which entire classes of output events are forbidden. This raises the question: could small differences between photons reveal themselves through the output events that are actually observed? It turns out that they can!
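The suppression law referred to here is the zero-transmission law of Tichy et al. (listed in the references). For the case used in this protocol, one photon in each input mode of the n-mode QFT, it follows from a short symmetry argument, sketched below in our own notation. Here d_1, …, d_n are the output modes of the n detected photons, labelled 0, …, n − 1, with repetitions when several photons share a mode. The matrix M collects the columns of U for those output modes, and for fully indistinguishable photons the event probability is proportional to |Perm(M)|².

$$
U_{jk} = \frac{\omega^{jk}}{\sqrt{n}}, \qquad \omega = e^{2\pi i/n}, \qquad M_{ji} = U_{j,d_i}, \qquad j,k \in \{0,\dots,n-1\},\; i \in \{1,\dots,n\}.
$$

$$
M'_{ji} := M_{j+1,i} = \omega^{d_i} M_{ji} \ \ (\text{row index mod } n)
\quad\Longrightarrow\quad
\operatorname{Perm}(M) = \operatorname{Perm}(M') = \omega^{Q}\,\operatorname{Perm}(M),
\qquad Q = \sum_{i=1}^{n} d_i .
$$

$$
\bigl(1-\omega^{Q}\bigr)\operatorname{Perm}(M) = 0
\quad\Longrightarrow\quad
P(d_1,\dots,d_n) = 0 \ \text{ whenever } Q \not\equiv 0 \pmod{n}.
$$

The first equality holds because M′ is a row permutation of M and the permanent is invariant under row permutations. The second holds because M′ is M with column i multiplied by ω^{d_i}. For n = 2 the QFT is a balanced beam splitter, and the only suppressed event, one photon in each output (Q = 1), is exactly the HOM dip.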

How partial distinguishability populates “suppressed” output events

A central technical insight of this work is that when the input state is not fully indistinguishable, many of these “suppressed” outputs become populated. Crucially, they are populated in a uniform and predictable way, determined by the structure of distinguishability in the input state. GI can therefore be measured from output classes that occur far more often than the rare events used by the cyclic interferometer.
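The contrast between the two extreme cases can be checked numerically for small n. The sketch below is our own illustration, and its helper names are hypothetical. It groups every detection pattern by the class q = (sum of the output-mode indices of all detected photons) mod n. We assume this is the kind of quantity meant by the suppression law, although the paper's exact definitions may differ. The code then computes exact class probabilities for fully indistinguishable photons (|Perm|²) and for fully distinguishable photons (Perm of |U|²). It covers only these two limits, not intermediate, partially distinguishable states.

```python
import itertools
import math
from collections import Counter

import numpy as np


def qft_matrix(n):
    """n-mode QFT (DFT) unitary with U[j, k] = exp(2*pi*i*j*k/n) / sqrt(n)."""
    j, k = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
    return np.exp(2j * np.pi * j * k / n) / np.sqrt(n)


def permanent(m):
    """Permanent by brute force over permutations; only suitable for small n."""
    n = m.shape[0]
    return sum(
        np.prod([m[i, p[i]] for i in range(n)])
        for p in itertools.permutations(range(n))
    )


def class_distribution(n, indistinguishable):
    """Exact probability of each class q = (sum of output-mode indices) mod n.

    Input: one photon in each of the n input modes of the QFT.
    indistinguishable=True  -> |Perm(U_sub)|^2 / prod(s_l!)
    indistinguishable=False -> Perm(|U_sub|^2) / prod(s_l!)  (fully distinguishable)
    """
    u = qft_matrix(n)
    dist = np.zeros(n)
    # Each sorted tuple of output modes is one detection pattern (repeats = bunching).
    for modes in itertools.combinations_with_replacement(range(n), n):
        sub = u[:, list(modes)]  # rows: all inputs; columns: one per detected photon
        mu = math.prod(math.factorial(s) for s in Counter(modes).values())
        if indistinguishable:
            p = abs(permanent(sub)) ** 2 / mu
        else:
            p = permanent(np.abs(sub) ** 2) / mu
        dist[sum(modes) % n] += p
    return dist


# Indistinguishable photons concentrate all probability in class 0;
# fully distinguishable photons spread it uniformly over the n classes.
for n in (3, 4, 5):
    print(n, np.round(class_distribution(n, True), 6), np.round(class_distribution(n, False), 6))
```

In the distinguishable limit, each photon independently leaves through any of the n modes with probability |U_jk|² = 1/n. The sum of output indices mod n is then uniform, which is why the suppressed classes fill up evenly.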

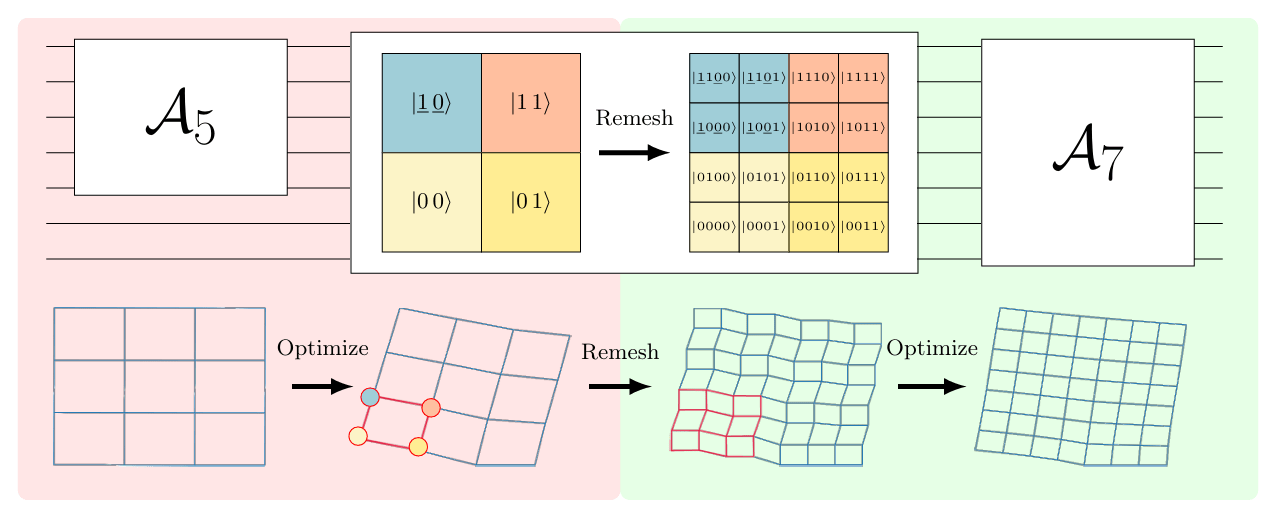

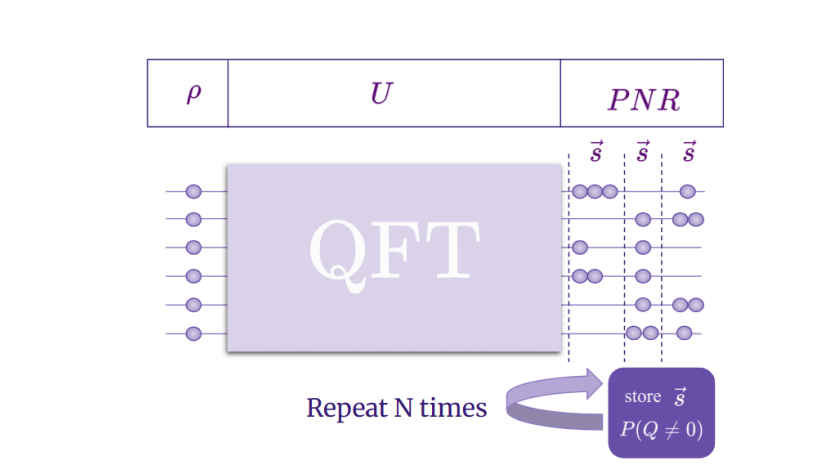

Operationally, the protocol estimates probabilities associated with specific classes of output configurations, characterized by a simple quantity derived from the detected pattern. These probabilities are then mapped directly to the value of GI.

Protocol overview. An n-photon state is sent through an n-mode QFT interferometer and specific output configurations are used to estimate GI.
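To make the mapping step concrete, here is a deliberately simplified sketch that does not reproduce the paper's general mapping. It makes two assumptions of its own. First, the class label is q = (sum of output-mode indices) mod n. Second, each run follows a toy noise model: with probability c it is fully indistinguishable, and the suppression law then confines it to class 0. Otherwise it is fully distinguishable, and q is uniform over 0, …, n − 1. In this model GI equals c and can be read off from the fraction of runs that land in suppressed classes. All function names are hypothetical.

```python
import numpy as np


def gi_from_class_counts(counts):
    """Toy GI estimate from counts[q] = number of runs observed in class q (q = 0..n-1).

    ASSUMPTION (toy model, not the paper's general mapping): every run is either
    fully indistinguishable (probability c, always lands in class 0) or fully
    distinguishable (probability 1 - c, classes uniform). Then
    P(q != 0) = (1 - c)(n - 1)/n, which is inverted below.
    """
    counts = np.asarray(counts, dtype=float)
    n = counts.size
    p_suppressed = counts[1:].sum() / counts.sum()
    return 1.0 - n / (n - 1) * p_suppressed


def simulate_class_counts(n, c, shots, rng):
    """Sample class labels from the same toy model (hypothetical helper)."""
    fully_indist = rng.random(shots) < c
    classes = np.where(fully_indist, 0, rng.integers(0, n, size=shots))
    return np.bincount(classes, minlength=n)


rng = np.random.default_rng(seed=1)
counts = simulate_class_counts(n=5, c=0.9, shots=100_000, rng=rng)
print(counts, gi_from_class_counts(counts))
```

With finite data the toy estimate can fall slightly outside [0, 1]. A real analysis would also have to handle general partially distinguishable states, loss, and detector imperfections.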

Exponentially improved scaling and experimental validation

For a prime number of photons, this mapping is especially straightforward, so that the number of experimental runs needed to reach a given accuracy does not increase with system size (constant sampling cost). The paper further proves that this scaling is optimal: no linear-optical strategy can achieve a better scaling in this case.
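A back-of-the-envelope calculation shows why a fixed precision need not cost more runs as n grows. It uses a toy model that is our own assumption, not the paper's analysis. In that model, each run is fully indistinguishable with probability c, so it lands in the unsuppressed class, and otherwise fully distinguishable, so it lands in a uniformly random class. The fraction of runs in suppressed classes is then p = (1 − c)(n − 1)/n, and the estimate ĉ = 1 − n·p̂/(n − 1) has variance (1 − c)(c + 1/(n − 1))/N after N runs. The sketch holds c fixed as n grows, which is a simplification, and it says nothing about the optimality proof or about the cyclic-interferometer cost.

```python
import math


def shots_for_precision(n, c, eps):
    """Runs N needed for standard error eps on the toy GI estimate c_hat.

    Toy model (assumption): P(suppressed class) = p = (1 - c)(n - 1)/n, and
    c_hat = 1 - n/(n - 1) * p_hat with p_hat a binomial fraction over N runs,
    so Var(c_hat) = (n/(n - 1))**2 * p(1 - p)/N = (1 - c)(c + 1/(n - 1))/N.
    """
    p = (1 - c) * (n - 1) / n
    variance_per_shot = (n / (n - 1)) ** 2 * p * (1 - p)
    return math.ceil(variance_per_shot / eps**2)


for n in (3, 5, 7, 11, 13, 101):
    print(n, shots_for_precision(n, c=0.9, eps=0.01))
```

In this toy setting, the required number of runs decreases slightly with n and levels off near (1 − c)c/ε², about 900 runs for c = 0.9 and ε = 0.01.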

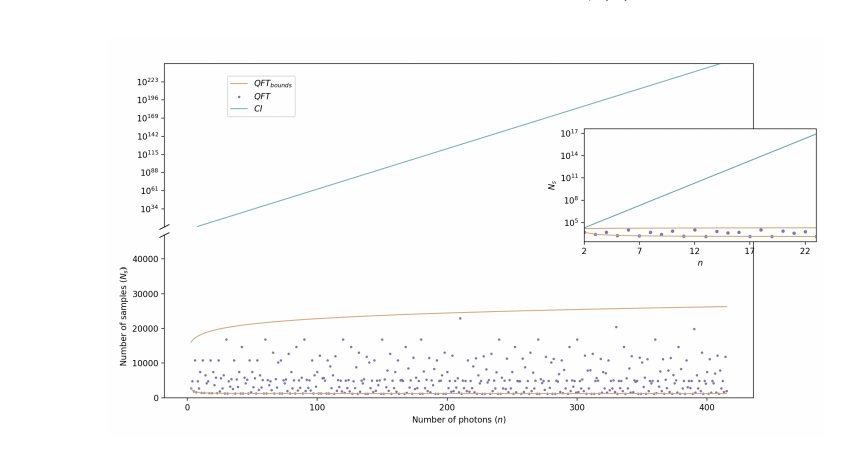

Sampling requirements to estimate GI: the cyclic interferometer scales exponentially, while the QFT protocol achieves constant scaling for prime n and sub-polynomial scaling otherwise.

For non-prime photon numbers, a small amount of additional classical data processing is required, but the overall cost still grows very slowly compared with previous methods (sub-polynomial scaling).

Experimental comparison on Quandela’s photonic quantum processor

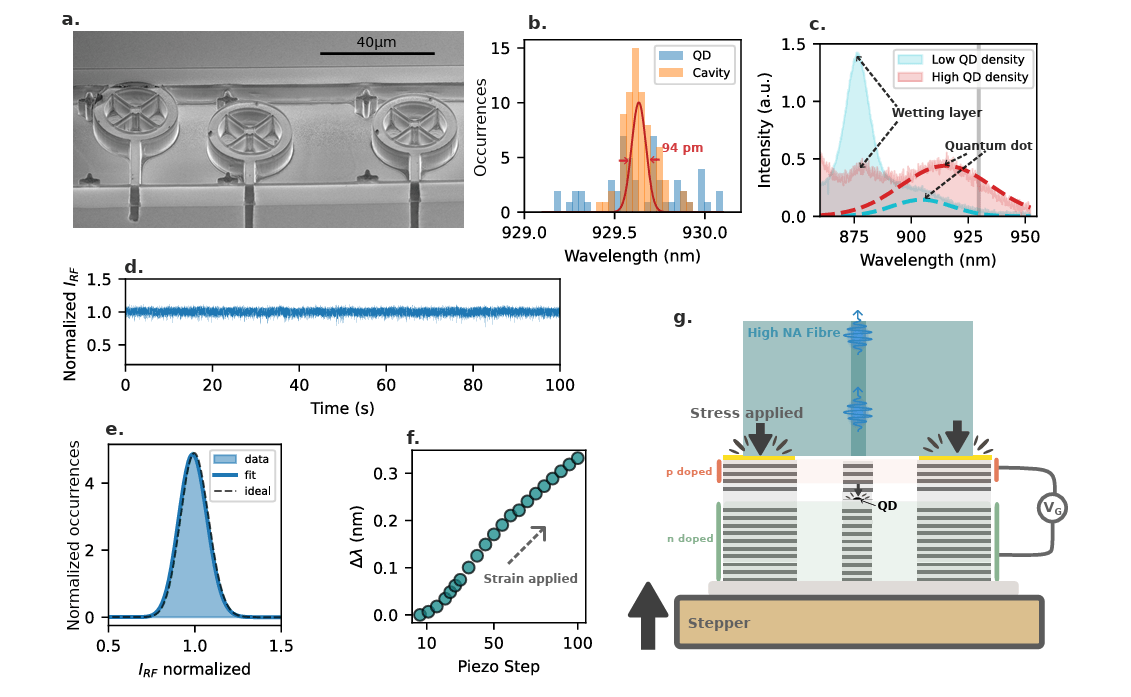

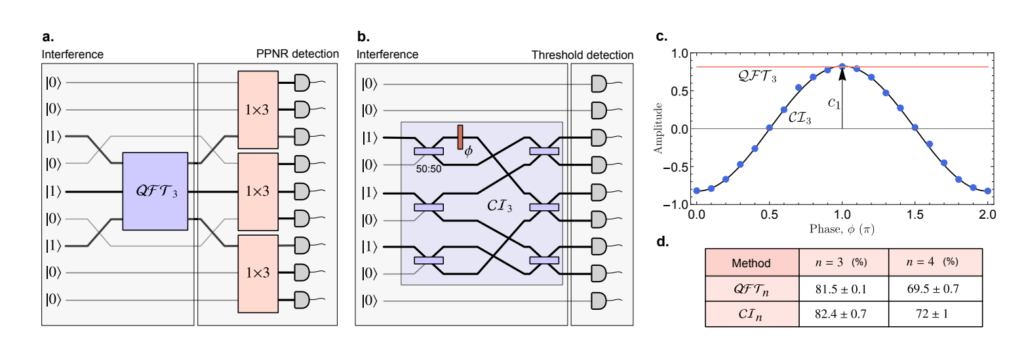

The protocol was implemented experimentally on Quandela’s reconfigurable photonic quantum processor and benchmarked against the cyclic interferometer approach, for n = 3 and n = 4 photons. The QFT protocol requires photon-number-resolving detectors, i.e. detectors that count how many photons are in each mode. The threshold detectors used at Quandela only tell us whether photons are present, not how many. For the experiment, the circuit was therefore adapted to implement pseudo-photon-number-resolving (PPNR) detection.

Experimental validation for n = 3 and n = 4 photons, comparing measured GI, runtime, and precision for QFT and cyclic interferometer protocols.

Even at these small system sizes, and using PPNR, the QFT-based protocol delivered a more precise estimate of GI with a significantly lower total runtime.
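The article does not detail how the circuit was adapted for PPNR. A common generic idea behind pseudo-photon-number resolution is to spread each output mode over several threshold detectors, so that bunched photons are likely to trigger different detectors. The sketch below assumes a lossless, perfectly balanced 1-to-m splitter in front of m threshold detectors. This is our assumption, not a description of the actual experiment, and the function name is hypothetical. Under that assumption, the code computes how often k photons sharing one output mode are all counted correctly. Whenever two photons hit the same detector the event is under-counted, which is one reason PPNR only approximates true photon-number resolution.

```python
import math


def ppnr_success_probability(k, m):
    """Probability that k photons in one output mode, spread by a balanced
    1-to-m splitter onto m threshold detectors, all hit different detectors.

    Assumes a lossless, perfectly balanced splitter: the k photons then end up
    multinomially distributed with probability 1/m per detector, so all-distinct
    outcomes have probability m!/((m - k)! m**k). math.perm(m, k) returns 0 when
    k > m, since k photons cannot all be resolved by fewer than k detectors.
    """
    return math.perm(m, k) / m**k


for k in (1, 2, 3):
    print(k, [round(ppnr_success_probability(k, m), 3) for m in (2, 4, 8)])
```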

Key takeaways

- Genuine n-photon indistinguishability (GI) is a key scalability metric for photonic quantum experiments.

- Previous exact GI benchmarks suffer from exponential sampling cost, limiting practical scalability.

- A QFT interferometer creates suppressed output classes for indistinguishable photons, while partial distinguishability populates them predictably.

- The new protocol estimates GI with constant sample complexity for prime photon numbers and sub-polynomial scaling otherwise.

- Experiments on Quandela hardware demonstrate better runtime and precision than the cyclic interferometer approach.

Impact on the field

A practical, scalable benchmark for multiphoton indistinguishability

As photonic quantum hardware scales, benchmarking tools must scale with it. We introduce the first scalable protocol for directly estimating genuine multiphoton indistinguishability (GI). Already at around 20 photons, it reduces the sampling cost by orders of magnitude compared with existing cyclic-interferometer approaches. This makes it a strong candidate for a standard benchmark for near-term and future photonic quantum processors.

Enabling reliable and fault-tolerant photonic quantum processing

By placing GI on a similar operational footing to standard qubit benchmarks, the protocol provides a concrete route to studying how much multiphoton indistinguishability is required for reliable photonic quantum information processing. This includes the implementation of linear-optical multi-qubit gates and the preparation of high-fidelity multi-photon resource states for fault-tolerant quantum computation.

Faster and more realistic characterization of noisy photonic hardware

The large reduction in required experimental samples directly shortens measurement times and enables faster feedback when tuning photon sources and interferometers. In addition, many existing classical simulation and validation methods for noisy boson-sampling experiments rely on simplified assumptions about photon distinguishability. By enabling efficient and direct estimation of GI across a wide range of multi-photon states, the protocol supports more accurate modelling and validation of realistic photonic quantum experiments.

References

- R. M. Sanz et al., “Exponential improvement in benchmarking multiphoton interference”, arXiv:2601.10289 (2026).

- M. Pont et al., “Quantifying n-photon indistinguishability with a cyclic integrated interferometer”, Phys. Rev. X 12, 031033 (2022).

- M. C. Tichy et al., “Zero-transmission law for multiport beam splitters”, Phys. Rev. Lett. 104, 220405 (2010).